Talend vs. Informatica vs. Fivetran vs. dbt: The Data Integration and ETL Comparison for IT Leaders (2026)

Talend, Informatica, Fivetran, and dbt compared for IT leaders in 2026. Architecture, pricing, MDM, governance, and real-world tradeoffs, plus the impact of Salesforce's acquisition of Informatica and the Fivetran + dbt merger.

Key Vendor Changes: Acquisitions and Mergers Affecting Your 2025–2026 Evaluation

Before the feature breakdown, three structural changes define the 2026 evaluation context.

Qlik acquired Talend in 2023, rebranding it as Qlik Talend. Talend's free Open Studio product was discontinued in January 2024, a forced migration for a large portion of its user base.

Salesforce acquired Informatica in an $8 billion deal that closed on November 18, 2025. INFA was delisted from NYSE. Informatica is now a wholly owned Salesforce subsidiary, a material change in vendor risk that belongs in every procurement evaluation.

Fivetran and dbt Labs announced a merger in October 2025 in an all-stock deal. Combined ARR is approximately $600M. Both products continue independently for now, but they are effectively the same company's roadmap, and that roadmap is positioning directly against Talend and Informatica.

The Core Architectural Differences

The Full-Platform Approach (Talend and Informatica)

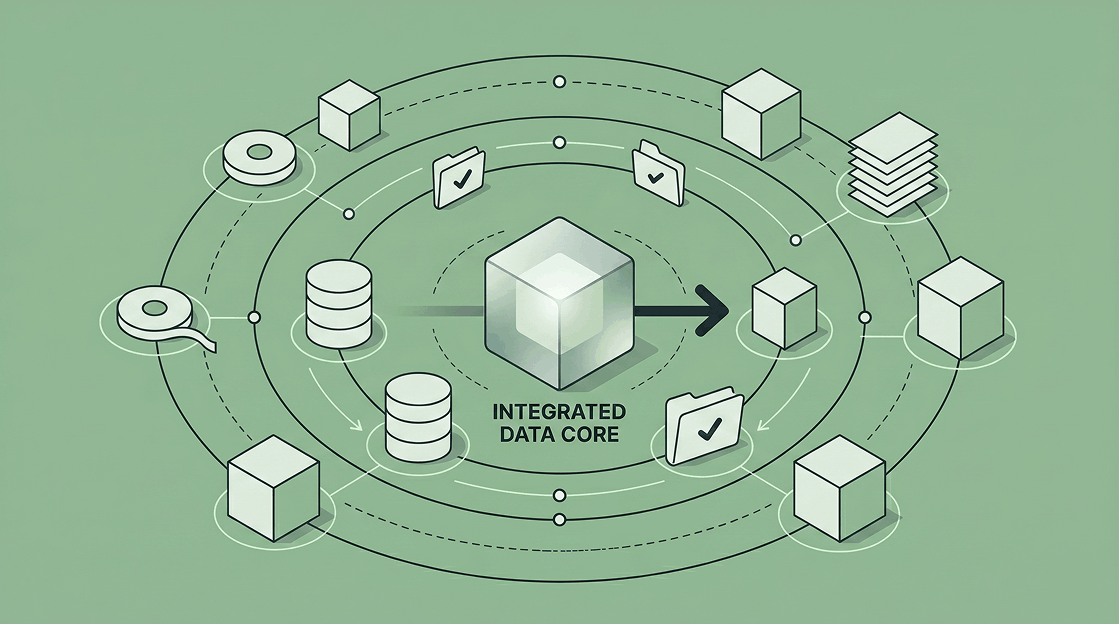

Both tools handle the complete data lifecycle: extraction, loading, transformation, quality checks, lineage, and governance, all within a single platform. The integration layer and the governance layer share the same metadata, the same pipeline context, and the same stewardship workflows.

- Primary benefit: One vendor for ingestion, quality, MDM, and governance. Audit trails and lineage are native, not bolted on.

- Primary limitation: Complexity and cost scale accordingly. Neither tool is self-service. Both require significant implementation investment, and pricing opacity is a consistent user complaint.

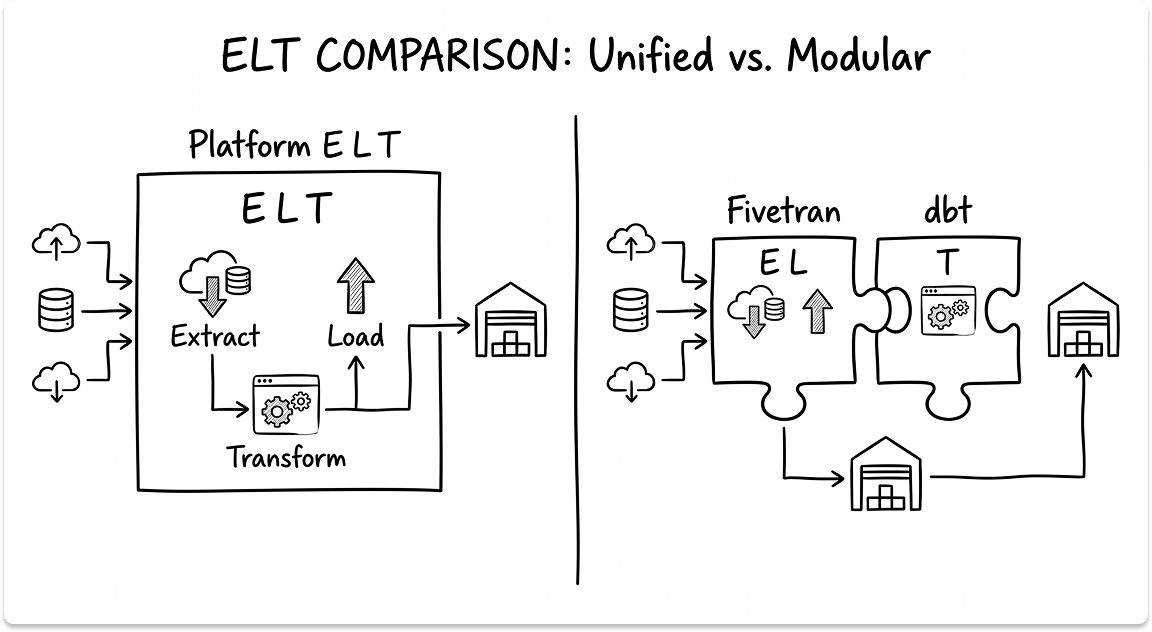

The Specialist Approach (Fivetran and dbt)

Fivetran and dbt each own one piece of the data pipeline with deliberate precision. Fivetran extracts and loads. dbt transforms. Neither tries to do the other's job.

- Primary benefit: Exceptional depth in their respective domains. Faster to deploy, easier to maintain, and open-source optionality with dbt Core.

- Primary limitation: You are assembling a stack, not buying a platform. Governance, quality, and lineage require additional tooling or manual effort.

Talend (Qlik Talend): Engineering Depth With Full-Platform Breadth

Architecture and Capabilities

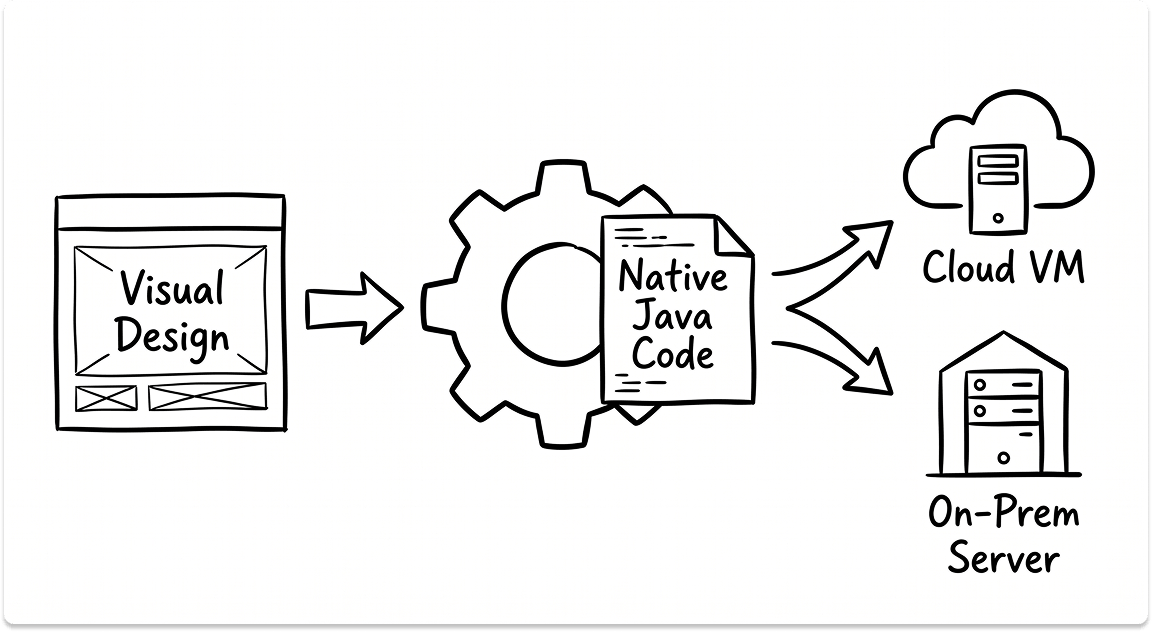

Talend's foundation is a code generator. You design pipelines visually in Talend Studio, built on Eclipse, and the tool generates native Java code from those designs.

That Java runs on any JVM-compatible platform: on-prem servers, cloud VMs, or local machines. This architecture means Talend functions as ETL (transformations run in its own engine) or ELT (execution pushed natively to the target warehouse), depending on configuration.

Key technical components:

- Jobs: the core execution unit, defining process flow and data flow between components

- tMap Component: handles expression mapping, multi-table lookups, routing, and joins in a single component

- Joblets: reusable task bundles (standardized error handling, notifications) deployable across multiple jobs

Engineers can inject custom Java directly into any component. The visual tooling has limits, and the escape hatch is always available when you need it.

Data Quality is native, not a separate module. Automatic profiling runs on semantic type recognition. The Qlik Talend Trust Score™ quantifies dataset readiness for AI and analytics workloads. Stewardship workflows route quality exceptions to business owners rather than dumping them back on the engineering team.

Connectivity spans 1,000+ connectors, the largest in this comparison. SAP, Mainframe, OpenAI, and Pinecone connectors are available at Enterprise tier. Apache Spark execution for high-throughput batch workloads is available at Premium and above.

True on-premises deployment via Talend Data Fabric is a genuine differentiator. For environments with strict data residency requirements (government, regulated finance, and defense), this matters in ways that no other tool in this list can fully match.

Operational Reality: Pros and Cons

- Pros: 1,000+ connectors covering legacy and modern systems; native data quality with automated profiling; column-level lineage and data stewardship built in; genuine on-prem deployment; multi-modal in one platform (batch, CDC, ETL, ELT, API)

- Cons: Pricing model is opaque. Moving from Standard to Premium introduces execution and duration charges alongside volume, making cost forecasting genuinely difficult. Eclipse-based Studio is resource-heavy and slow on Mac. Git and CI/CD integration is manual and fragmented compared to modern toolchains. Open Studio discontinuation forces former free users into a paid migration.

Best Suited For: Regulated enterprises with hybrid or on-prem requirements, teams replacing legacy ETL platforms (IBM DataStage, older PowerCenter), and environments where data quality and stewardship need to be embedded inside the data pipeline, not managed separately.

Informatica (IDMC): The Enterprise Standard, Now Under Salesforce

Architecture and Capabilities

Informatica has been in data management since 1993. The current product is the Intelligent Data Management Cloud (IDMC), a cloud-native platform spanning ETL/ELT, data quality, Master Data Management (MDM), governance (AXON), catalog, and observability. For over 20 consecutive years, Gartner has placed it in the Leaders quadrant for Data Integration Tools.

The core deployment mechanism is the Secure Agent, a lightweight process running inside the customer's network. Data integration jobs execute in the customer's environment; Informatica's cloud handles orchestration and management.

This hybrid model is central to the enterprise pitch: your data stays in your environment.

The CLAIRE AI Engine runs throughout the platform, auto-classifying sensitive data, recommending transformations, predicting quality issues, and automating metadata discovery. Informatica supports ETL and ELT with pushdown execution to the warehouse when needed.

MDM is the capability nobody else in this comparison offers at comparable depth. It creates and maintains a single authoritative record for business-critical entities: customers, products, suppliers, and employees across all source systems. For an enterprise where the same customer exists under 14 different spellings across 12 different systems, MDM is not a nice-to-have.
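To make the matching problem concrete, here is a minimal sketch of how duplicate customer records might be grouped and collapsed into a single golden record. It is not Informatica's matching logic. The field names, the suffix list, the 0.85 similarity threshold, and the "most recently updated record wins" survivorship rule are all illustrative assumptions.

```python
from difflib import SequenceMatcher

# Illustrative suffix list (assumption); a real rule set would be far larger.
LEGAL_SUFFIXES = {"inc", "incorporated", "llc", "ltd", "limited",
                  "corp", "corporation", "co"}

def normalize(name: str) -> str:
    """Lowercase, drop punctuation, and strip common legal suffixes."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(t for t in cleaned.split() if t not in LEGAL_SUFFIXES)

def is_match(a: str, b: str, threshold: float = 0.85) -> bool:
    """Fuzzy-compare two normalized names (the threshold is an assumption)."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= threshold

def build_golden_records(records, threshold=0.85):
    """Cluster records by name similarity, then keep one survivor per cluster."""
    clusters = []
    for rec in records:
        for cluster in clusters:
            if is_match(rec["name"], cluster[0]["name"], threshold):
                cluster.append(rec)
                break
        else:
            clusters.append([rec])
    golden = []
    for cluster in clusters:
        # Assumed survivorship rule: most recent ISO date string wins.
        survivor = max(cluster, key=lambda r: r["updated"])
        golden.append({
            "name": survivor["name"],
            "sources": sorted(r["system"] for r in cluster),
        })
    return golden

records = [
    {"system": "crm", "name": "Acme Corp.", "updated": "2025-03-01"},
    {"system": "erp", "name": "ACME Corporation", "updated": "2025-06-15"},
    {"system": "billing", "name": "Acme, Inc.", "updated": "2024-11-20"},
    {"system": "crm", "name": "Globex LLC", "updated": "2025-01-10"},
    {"system": "support", "name": "Globbex", "updated": "2024-05-02"},
]
golden = build_golden_records(records)
for record in golden:
    print(record)
```

Five source rows collapse into two entities here. Production MDM adds much more on top of this idea, such as multi-attribute matching, steward review queues, and per-field survivorship, which is where the implementation effort goes.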

AXON (Data Governance) is a full product within the platform: business glossaries, policy management, stewardship workflows, ownership assignment, and compliance tracking. 500+ connectors cover SAP, Salesforce, Workday, Oracle, legacy mainframes, and cloud platforms. Streaming is supported via Kafka-based Informatica Streaming.

The Salesforce Factor

Salesforce completed its acquisition of Informatica on November 18, 2025. INFA shares were delisted from NYSE. The evaluation picture has changed.

Salesforce's stated intent is to integrate Informatica's data catalog, governance, and MDM capabilities into Agentforce and Data Cloud. What that means practically:

- Salesforce-heavy shops gain a compelling integration angle. Tighter native connections between CRM data and enterprise data management are a plausible near-term outcome.

- Non-Salesforce shops carry increased roadmap risk. Salesforce will rationally prioritize features that serve its ecosystem. Capabilities that don't serve Salesforce Data Cloud may see slower development.

- Procurement dynamics change. Choosing Informatica now means betting on Salesforce's long-term commitment to the broader IDMC platform, not just the capabilities that make sense for Salesforce.

The core product capabilities remain intact. MDM, AXON, CLAIRE, and Secure Agent architecture are unchanged. But vendor risk is not the same as it was 12 months ago.

Operational Reality: Pros and Cons

- Pros: The only tool in this comparison with enterprise-grade MDM; full governance platform (AXON) native to the stack; CLAIRE AI for automated metadata and quality; Secure Agent hybrid architecture keeps data in your environment; 20+ years of Gartner Leadership

- Cons: Not self-service. Implementations routinely require weeks to months of professional services. IPU pricing is opaque and variable. PowerCenter-to-IDMC migration requires redesign, not a lift-and-shift. Highest total cost of ownership in this comparison. Roadmap now tied to Salesforce's priorities.

Best Suited For: Enterprises with hard MDM requirements, organizations already invested in the Salesforce ecosystem, regulated industries with complex governance obligations, and teams migrating from PowerCenter who want to stay within the same vendor lineage.

Fivetran: Automated ELT for the Modern Data Stack

Architecture and Capabilities

Fivetran's premise is focused: data pipelines should not require engineering attention after setup. The platform serves over 7,600 customers worldwide and guarantees 99.9% uptime across Standard, Enterprise, and Business Critical tiers.

The architecture separates cleanly into three layers: source systems, Fivetran's cloud (orchestration), and the customer's destination warehouse. Customer data never persists in Fivetran's environment.

Each sync spawns ephemeral workers that extract, normalize, and stage data, write to the destination, then expire. No persistent processes. No servers to maintain.

The incremental sync engine runs on Change Data Capture (CDC), reading directly from source database transaction logs with minimal source performance impact. Four CDC methods are supported: log-based, timestamp-based, trigger-based, and log-free. Idempotent design means failed syncs restart from the last known good state. No duplicates.
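The restart behavior is easier to reason about with a small example. The sketch below is not Fivetran's implementation. It assumes a change log ordered by a hypothetical sequence number (`lsn`), an in-memory dict standing in for the destination table, and a cursor that advances only after a full batch is applied.

```python
def apply_change(destination, change):
    """Upsert or delete by primary key, so replaying a change is harmless."""
    if change["op"] == "delete":
        destination.pop(change["pk"], None)
    else:  # insert and update are both treated as an upsert
        destination[change["pk"]] = change["row"]

def sync(change_log, destination, state, crash_after=None):
    """Apply every change past the saved cursor, then advance the cursor.

    The cursor moves only once the whole batch has been applied, so a crash
    mid-batch leaves it at the last known good position.
    """
    pending = [c for c in change_log if c["lsn"] > state["cursor"]]
    for applied, change in enumerate(pending):
        if crash_after is not None and applied == crash_after:
            raise RuntimeError("simulated worker crash")
        apply_change(destination, change)
    if pending:
        state["cursor"] = pending[-1]["lsn"]

change_log = [
    {"lsn": 1, "op": "insert", "pk": 1, "row": {"name": "Ada"}},
    {"lsn": 2, "op": "insert", "pk": 2, "row": {"name": "Grace"}},
    {"lsn": 3, "op": "update", "pk": 1, "row": {"name": "Ada L."}},
    {"lsn": 4, "op": "delete", "pk": 2, "row": None},
]
destination, state = {}, {"cursor": 0}

try:
    sync(change_log, destination, state, crash_after=2)  # fails mid-batch
except RuntimeError:
    pass
cursor_after_crash = state["cursor"]

sync(change_log, destination, state)  # retry replays the batch from the cursor
sync(change_log, destination, state)  # a further run finds nothing new
print(destination, state)
```

Because every write is keyed by primary key, replaying the two changes that landed before the crash produces the same rows rather than duplicates.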

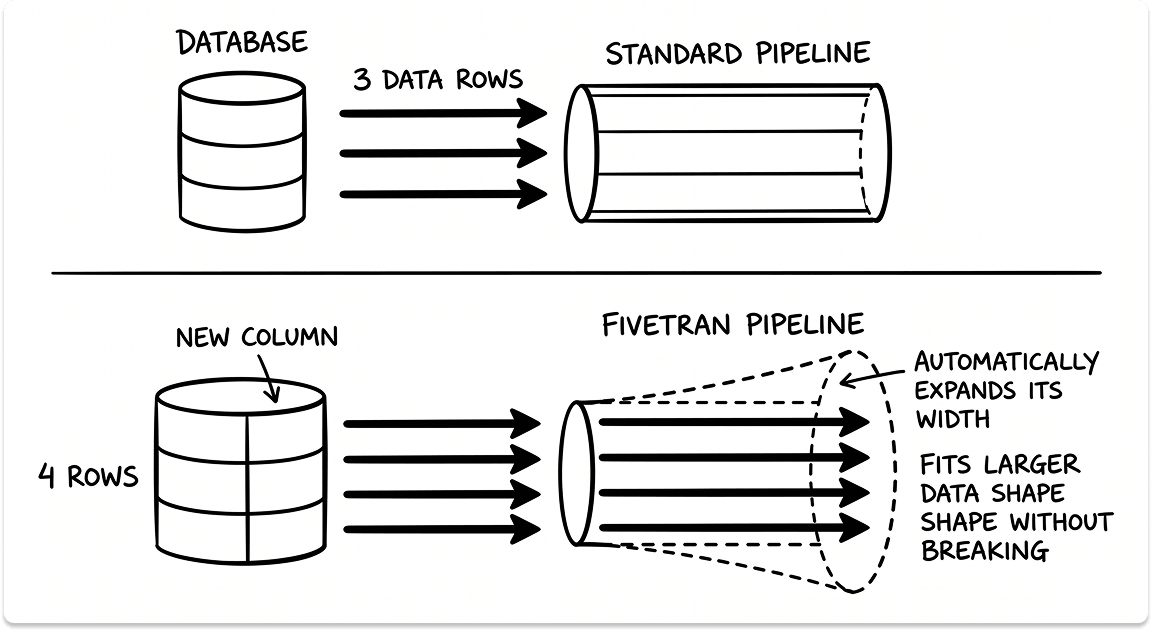

700+ fully managed connectors. SaaS source coverage (Salesforce, HubSpot, Stripe, NetSuite, Google Analytics, and Intercom) is best-in-class. When a source schema changes, Fivetran detects and propagates the change automatically. This eliminates an entire category of recurring pipeline maintenance.
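For teams that have maintained this by hand, the work being automated looks roughly like the sketch below. It is an illustration of schema drift detection in general, not Fivetran's internals. The table name, column types, and the policy of only propagating additive changes are assumptions.

```python
def detect_schema_drift(source_cols: dict, dest_cols: dict) -> dict:
    """Compare column-name -> type mappings for a source and a destination."""
    added = {c: t for c, t in source_cols.items() if c not in dest_cols}
    removed = sorted(c for c in dest_cols if c not in source_cols)
    retyped = {
        c: (dest_cols[c], t)
        for c, t in source_cols.items()
        if c in dest_cols and dest_cols[c] != t
    }
    return {"added": added, "removed": removed, "retyped": retyped}

def plan_ddl(table: str, drift: dict) -> list:
    """Emit DDL for additive changes only (assumed policy).

    Removed and retyped columns are reported but left for a human or a
    separate policy to handle, since dropping data is not reversible.
    """
    return [
        f"ALTER TABLE {table} ADD COLUMN {col} {col_type}"
        for col, col_type in drift["added"].items()
    ]

source = {"id": "INTEGER", "email": "TEXT", "plan": "TEXT", "mrr": "NUMERIC"}
destination = {"id": "INTEGER", "email": "TEXT", "mrr": "INTEGER", "legacy_flag": "BOOLEAN"}

drift = detect_schema_drift(source, destination)
statements = plan_ddl("analytics.customers", drift)
print(drift)
print(statements)
```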

Fivetran Activations, acquired via Census in May 2025, adds 200+ reverse ETL pipelines that sync enriched warehouse data back into CRMs, marketing platforms, and support tools. Audience Hub and AI Columns (LLM-generated fields via ChatGPT, Claude, and Gemini) are included.

Security is pre-certified: SOC 1, SOC 2, ISO 27001, PCI DSS Level 1, HIPAA BAA, and HITRUST. Column-level blocking and hashing prevent sensitive data from reaching destinations. No custom compliance configuration required.
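Column-level blocking and hashing are simple to picture. The following is a generic sketch of the technique, assuming SHA-256 with an optional salt and hypothetical column names. It does not describe the algorithm or configuration Fivetran actually uses.

```python
import hashlib

def protect_row(row: dict, blocked: set, hashed: set, salt: str = "") -> dict:
    """Drop blocked columns and replace hashed columns with a SHA-256 digest."""
    safe = {}
    for column, value in row.items():
        if column in blocked:
            continue  # the column never reaches the destination
        if column in hashed and value is not None:
            digest = hashlib.sha256((salt + str(value)).encode("utf-8"))
            safe[column] = digest.hexdigest()
        else:
            safe[column] = value
    return safe

row = {"user_id": 42, "email": "ada@example.com", "ssn": "123-45-6789", "plan": "pro"}
safe = protect_row(row, blocked={"ssn"}, hashed={"email"}, salt="demo-salt")
print(safe)
```

Hashing is deterministic, so the hashed `email` column can still be used as a join key across tables without exposing the raw value.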

Pricing uses a Monthly Active Rows (MAR) model. MAR counts distinct primary keys added, updated, or deleted per month. Initial and historical syncs are free; only ongoing changes count. Plans range from Free (500K MAR) to Business Critical (1-minute sync, customer-managed keys, GovCloud). Enterprise License Agreements provide unlimited consumption at a fixed annual price.
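The counting rule can be sketched in a few lines. This follows only the definition given above (distinct primary keys changed per month, initial syncs excluded). The event shape and the `initial_sync` label are assumptions, and actual billing may apply further rules.

```python
from collections import defaultdict

BILLABLE_OPS = {"insert", "update", "delete"}

def monthly_active_rows(events):
    """Count distinct (table, primary key) pairs changed in each month.

    events: iterable of (month, table, primary_key, op) tuples.
    Rows loaded by an initial or historical sync are not counted.
    """
    active = defaultdict(set)
    for month, table, primary_key, op in events:
        if op in BILLABLE_OPS:
            active[month].add((table, primary_key))
    return {month: len(keys) for month, keys in active.items()}

events = [
    ("2026-01", "orders", 1, "initial_sync"),  # free
    ("2026-01", "orders", 1, "update"),
    ("2026-01", "orders", 1, "update"),        # same row again: still one MAR
    ("2026-01", "orders", 2, "insert"),
    ("2026-01", "users", 1, "update"),         # same key, different table
    ("2026-02", "orders", 1, "delete"),
]
mar = monthly_active_rows(events)
print(mar)
```

A row updated a thousand times in a month counts once, but a bulk update that touches every row in a large table makes every one of those rows active, which is where the cost spikes come from.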

Operational Reality: Pros and Cons

- Pros: 10-minute connector setup; automated schema drift handling; zero-maintenance once running; built-in HIPAA and PCI DSS compliance; best SaaS source coverage in this comparison

- Cons: MAR pricing is unpredictable on high-churn tables. Backfills and bulk updates can spike costs dramatically with no real-time warning. No native data quality, lineage, or governance. Silent failures require SQL log queries to diagnose. Engineering escalation SLAs lack definition on non-enterprise tiers. The fully managed model limits customization for complex edge cases.

Best Suited For: Modern cloud-native stacks (Snowflake, BigQuery, Databricks) that need fast, reliable ingestion across many SaaS sources with minimal engineering overhead. Particularly strong for lean data teams building a modern data stack, and organizations where HIPAA or PCI compliance needs to be built in, not configured.

dbt: The Transformation Standard for Cloud Data Pipelines

Architecture and Capabilities

dbt Labs was founded in 2016. dbt Core has over 12,700 GitHub stars and a community of over 100,000 data professionals. There is one thing it cannot do: move data. dbt is a T tool, not an ETL tool. Every deployment requires a separate EL layer (Fivetran, Airbyte, or custom pipelines). dbt's job starts after data lands in the warehouse.

The language is SQL SELECT statements plus Jinja templating, YAML configuration files, and ref() functions for declaring model dependencies. Write the business logic; dbt handles the DDL and DML, including CREATE TABLE, INSERT, and schema management. Two engines are available:

dbt Core (Python, Apache 2.0, open-source): stateless, CLI-based, errors surface post-execution. Zero vendor dependency. Self-host with Airflow or Prefect. Many engineering teams do exactly this to avoid Cloud seat costs.

dbt Fusion (Rust, new engine), a complete rewrite with meaningful performance gains:

- Significantly faster parsing than dbt Core, per dbt Labs benchmarks

- Real-time validation as you type, with errors surfacing before execution

- Local execution that emulates Snowflake, BigQuery, and Databricks, so no cloud compute is consumed during development

- PII flow tracking through complex transformation chains for audit-ready compliance views

- State-aware orchestration (Enterprise) that only rebuilds models with refreshed upstream data, delivering 10–30% compute cost reduction
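Two ideas from this section, ref()-declared dependencies and state-aware rebuilds, can be illustrated together. The sketch below is not how dbt Core or Fusion is implemented. It uses a simplified regular expression in place of real Jinja parsing, hypothetical model names, and a plain "rebuild anything downstream of refreshed data" rule.

```python
import re
from graphlib import TopologicalSorter

# Simplified stand-in for Jinja parsing: matches {{ ref('model_name') }}.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")

def build_graph(models: dict) -> dict:
    """Map each model to the set of models it references via ref()."""
    return {name: set(REF_PATTERN.findall(sql)) for name, sql in models.items()}

def build_order(graph: dict) -> list:
    """Return an order in which every model runs after its dependencies."""
    return list(TopologicalSorter(graph).static_order())

def models_to_rebuild(graph: dict, order: list, refreshed: set) -> list:
    """Select refreshed models plus everything downstream of them."""
    stale = set(refreshed)
    selected = []
    for name in order:
        if name in refreshed or graph.get(name, set()) & stale:
            stale.add(name)
            selected.append(name)
    return selected

models = {
    "stg_orders": "select * from raw.orders",
    "stg_customers": "select * from raw.customers",
    "dim_customers": "select * from {{ ref('stg_customers') }}",
    "fct_orders": (
        "select o.*, c.segment from {{ ref('stg_orders') }} o "
        "join {{ ref('stg_customers') }} c using (customer_id)"
    ),
}
graph = build_graph(models)
order = build_order(graph)
rebuild = models_to_rebuild(graph, order, refreshed={"stg_orders"})
print(order)
print(rebuild)
```

Only two of the four models are selected when new data arrives for `stg_orders` alone. Skipping the untouched branch is the mechanism behind the compute savings, whatever the exact figure turns out to be in a given project.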

dbt Mesh addresses the enterprise scale problem. When a single project grows to hundreds of models across multiple teams, it becomes unmanageable. Mesh adds cross-project references, model contracts with enforced schema expectations between teams, versioning, and access controls.

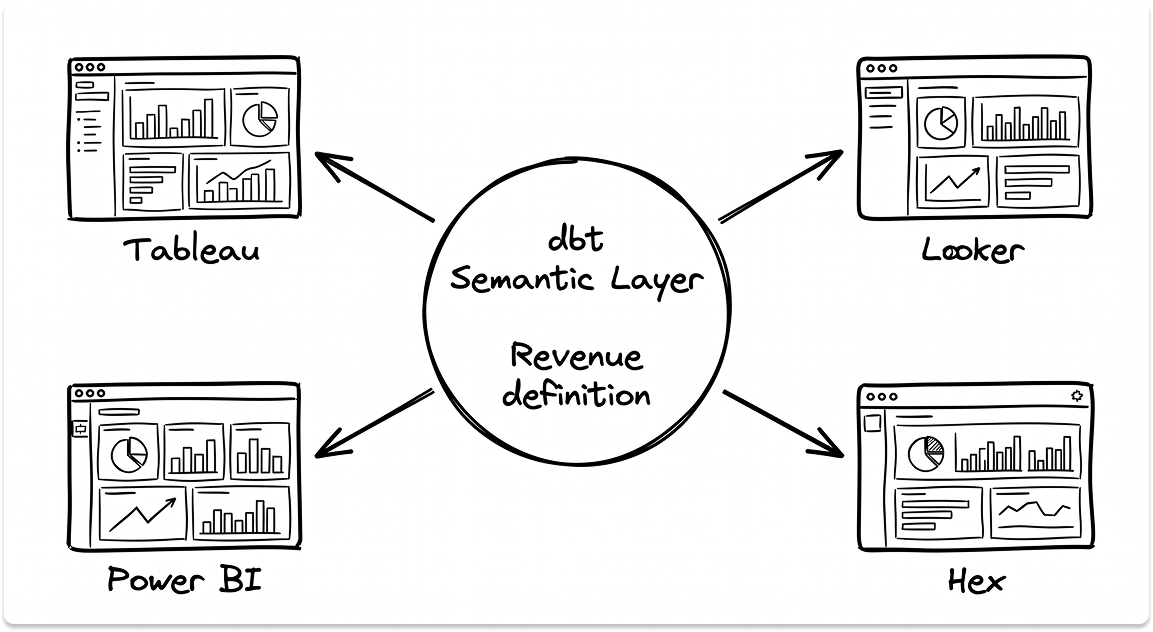

dbt Semantic Layer (powered by MetricFlow) solves metric inconsistency. Define revenue once. Tableau, Looker, Power BI, and Hex all read the same definition. The problem of four different revenue numbers across four different dashboards goes away.
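The "define once" idea can be shown with a toy metric registry. This is a conceptual sketch only, not MetricFlow's API or configuration format. The table, column, and filter names are hypothetical.

```python
# Hypothetical metric registry; table and column names are illustrative.
METRICS = {
    "revenue": {
        "table": "fct_orders",
        "expression": "SUM(amount)",
        "filter": "status = 'completed'",
    }
}

def compile_metric(name: str, group_by=()) -> str:
    """Render one shared metric definition into SQL for any grouping."""
    metric = METRICS[name]
    dimensions = list(group_by)
    select_cols = dimensions + [f"{metric['expression']} AS {name}"]
    sql = (
        f"SELECT {', '.join(select_cols)} "
        f"FROM {metric['table']} WHERE {metric['filter']}"
    )
    if dimensions:
        sql += f" GROUP BY {', '.join(dimensions)}"
    return sql

total = compile_metric("revenue")
by_region = compile_metric("revenue", group_by=["region"])
print(total)
print(by_region)
```

Every consumer gets the same expression and the same filter, so a disagreement over whether revenue includes cancelled orders is settled in one place rather than in each dashboard.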

Testing runs natively: not_null, unique, accepted_values, referential integrity, and unit tests with mock inputs. Failures halt downstream jobs before bad data reaches dashboards.
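In dbt these tests are declared in YAML and compiled to SQL. The Python sketch below only mirrors their semantics on in-memory rows to show what each check looks for and how a failure stops downstream work. The function names, sample data, and `DataTestFailure` exception are illustrative assumptions.

```python
from collections import Counter

def not_null(rows, column):
    """Rows where the column is missing or None."""
    return [r for r in rows if r.get(column) is None]

def unique(rows, column):
    """Non-null values that appear more than once."""
    counts = Counter(r[column] for r in rows if r.get(column) is not None)
    return sorted(value for value, n in counts.items() if n > 1)

def accepted_values(rows, column, allowed):
    """Rows whose non-null value falls outside the allowed set."""
    return [r for r in rows if r.get(column) is not None and r[column] not in allowed]

def relationships(child_rows, column, parent_rows, parent_column):
    """Child rows pointing at a parent key that does not exist."""
    parent_keys = {p[parent_column] for p in parent_rows}
    return [r for r in child_rows
            if r.get(column) is not None and r[column] not in parent_keys]

class DataTestFailure(Exception):
    def __init__(self, failed_tests):
        super().__init__(f"failed tests: {failed_tests}")
        self.failed_tests = failed_tests

def run_pipeline(test_results, downstream):
    """Run the downstream step only if every test returned no failing rows."""
    failed = sorted(name for name, bad in test_results.items() if bad)
    if failed:
        raise DataTestFailure(failed)
    return downstream()

customers = [{"id": 1}, {"id": 2}]
orders = [
    {"order_id": 10, "customer_id": 1, "status": "shipped"},
    {"order_id": 11, "customer_id": 3, "status": "shipped"},   # orphan customer
    {"order_id": 11, "customer_id": 2, "status": "lost"},      # duplicate id, bad status
    {"order_id": None, "customer_id": 2, "status": "placed"},  # null id
]
results = {
    "not_null_order_id": not_null(orders, "order_id"),
    "unique_order_id": unique(orders, "order_id"),
    "accepted_values_status": accepted_values(orders, "status", {"placed", "shipped", "returned"}),
    "relationships_customer_id": relationships(orders, "customer_id", customers, "id"),
}
published, failed = [], []
try:
    run_pipeline(results, lambda: published.append("dashboard_refresh"))
except DataTestFailure as exc:
    failed = exc.failed_tests
print(failed, published)
```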

Documentation compiles automatically from YAML metadata into a searchable site with full column-level lineage graphs. Everything lives in Git; every change is reviewable via PR.

Pricing uses seats plus Successful Models Built. Developer tier is free (1 seat, 3,000 models/month). Starter is $100/seat/month with 15,000 models included. Enterprise is custom annual pricing. dbt Core is free regardless.

Operational Reality: Pros and Cons

- Pros: SQL-first approach lets analysts do what previously required a data engineer; Git-native with CI/CD catching bad data before it hits production; auto-generated lineage and documentation as a byproduct of writing dbt code; Semantic Layer ending metric inconsistency; dbt Core is open source and fully portable

- Cons: No ingestion, full stop. Requires a separate EL tool. $100/seat/month compounds fast for large teams. Jinja and macro complexity has a steep learning curve for dynamic SQL. Not a full orchestrator; complex cross-system dependencies still need Airflow, Prefect, or Dagster alongside. Batch only, no streaming, no sub-minute data freshness.

Best Suited For: Engineering and analytics teams on modern cloud warehouses who want transformation logic that is tested, documented, version-controlled, and metric-consistent across BI tools. Pairs naturally with Fivetran for the EL layer, and the October 2025 merger makes that pairing a single vendor relationship.

Comparing all 4 Data Integration Tools


How to Choose

You need MDM and governance across a complex, multi-system environment. Informatica. Nothing else in this comparison comes close on MDM depth or governance breadth. Factor in the Salesforce acquisition. If you are already a Salesforce shop, this strengthens the case. If you are not, evaluate the roadmap risk honestly before signing a multi-year contract.

You have legacy on-prem systems, strict data residency requirements, or are replacing IBM DataStage or older PowerCenter. Talend. It is the only tool here with genuine full on-prem deployment and 1,000+ connectors covering legacy systems alongside modern cloud platforms. The pricing opacity is a real constraint. Demand a detailed cost model before signing.

You are building a modern cloud data stack and need fast, reliable ingestion across many SaaS sources. Fivetran. Ten-minute connector setup, zero-maintenance schema management, and built-in compliance certifications make it the standard answer for Snowflake, BigQuery, and Databricks stacks. Understand your high-churn tables before you sign. MAR billing on event tables and frequently updated CRM records can produce surprises.

You need your transformation layer to be testable, documented, version-controlled, and metric-consistent. dbt. Pair it with Fivetran for EL. If you want zero vendor dependency, start with dbt Core and self-host. Move to dbt Cloud when the operational overhead of managing Core becomes the constraint. The October 2025 Fivetran merger makes the combined stack an increasingly cohesive choice.

You need ingestion plus transformation without building a full platform. Fivetran + dbt. This is the modern data stack answer for cloud data pipeline tools, and it now comes from a single merged entity. Add Airflow or Prefect for orchestration if your pipeline complexity demands it.


FAQ

What is the difference between Talend, Informatica, Fivetran, and dbt?

Talend and Informatica are end-to-end data management platforms covering ingestion, transformation, data quality, MDM, and governance. Fivetran is an EL (extract and load) tool: it moves data from source systems into a destination warehouse, reliably and with minimal engineering overhead, but does not transform or govern it. dbt is a transformation-only tool: it runs SQL-based transformations inside the warehouse after data has already been loaded. They are not direct substitutes. Many teams combine Fivetran and dbt to cover ingestion and transformation, while Talend or Informatica serve organizations that need a single platform with governance and quality built in.

Is dbt an ETL tool?

No. dbt is a T tool, not an ETL tool. It handles the transformation step only, and only after data has already landed in a warehouse. It has no connectors, no extraction capability, and no ability to move data between systems. A separate EL layer (Fivetran, Airbyte, or a custom pipeline) is required before dbt has anything to work with. The common confusion comes from dbt's positioning within the modern data stack, where it typically sits downstream of a dedicated ingestion tool.

What does the Salesforce acquisition of Informatica mean for existing customers?

In the short term, the core IDMC product is unchanged. MDM, AXON governance, CLAIRE AI, and the Secure Agent architecture remain intact. The longer-term risk is roadmap alignment: Salesforce will rationally prioritize features that serve Salesforce Data Cloud and Agentforce. Organizations already running Salesforce have a credible integration story. Organizations that are not in the Salesforce ecosystem should evaluate whether Salesforce's product priorities will continue to serve their use case before committing to a multi-year contract.

When should I use Fivetran instead of Talend or Informatica?

Use Fivetran when your primary requirement is fast, reliable ingestion from SaaS and cloud sources into a modern cloud warehouse, and you do not need native data quality enforcement, master data management, or a built-in governance layer. Fivetran's 10-minute connector setup, automated schema drift handling, and pre-certified HIPAA and PCI DSS compliance make it the standard answer for lean data teams on Snowflake, BigQuery, or Databricks. If your requirements include MDM, column-level lineage at enterprise scale, on-premises deployment, or stewardship workflows, Talend or Informatica are the more appropriate choices.

What does the Fivetran and dbt Labs merger mean for data teams?

Both products continue to operate on independent roadmaps for now. The strategic direction is toward tighter EL-to-T integration: shared metadata, unified lineage, and a single vendor support relationship. For teams already using both tools, the merger simplifies procurement and creates a more coherent combined roadmap. dbt Core remains open source under Apache 2.0 regardless of how the merger evolves, so teams self-hosting dbt Core have no forced migration risk. The combined entity positions directly against Talend and Informatica for cloud-native data pipeline workloads.