The IT Vendor Scorecard: A Practical Guide and Template

A free IT vendor scorecard for CIOs and CISOs. Score vendors on security, SLAs, AI governance, and more. No signup. No download.

The quarterly vendor review ends. Scores are shared. The vendor nods, apologizes for the SLA breach, cites a "one-off infrastructure issue," and promises a corrective action plan by next week. That plan never arrives. Six months later, the same vendor is on your renewal list. Nobody can make a strong case to drop them. The scorecard gets filed. The contract gets signed.

That is not a vendor management failure. That is a scorecard design failure. The numbers existed. The accountability did not.

A vendor scorecard, used correctly, is a structured commitment between your team and your vendor on what good looks like, where the gaps are, and what happens if those gaps persist. The measurement is just the starting point. What matters is what the measurement forces.

Why IT Vendor Management Is a Different Problem Entirely

Generic vendor scorecard guides are written for procurement managers evaluating physical goods suppliers. Delivery rate and defect count are the right metrics when you are buying raw materials. They are the wrong metrics when you are managing the software, infrastructure, and service providers that your entire operation runs on.

The IT vendor portfolio has three characteristics that make it categorically harder to manage.

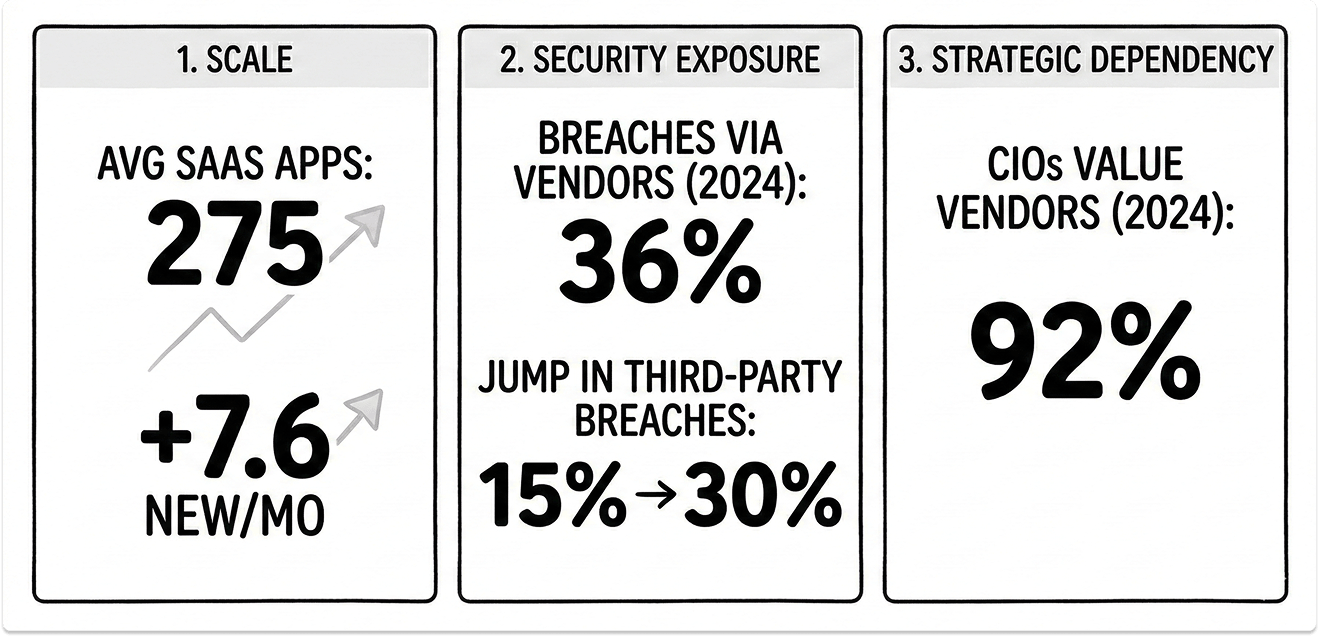

Scale. According to Zylo's 2025 SaaS Management Index, the average company manages 275 SaaS applications, with 7.6 new applications entering the environment every month. No team has the bandwidth to maintain a meaningful scorecard across 275 relationships. Prioritization is not optional. It is the first decision you have to make.

Security exposure. Your vendor portfolio and your threat surface are the same thing. According to SecurityScorecard, at least 36% of all data breaches in 2024 originated from third-party compromises, up 6.5% year-over-year. Verizon's 2025 DBIR found that third-party involvement in breaches leapt from 15% to 30% in a single year. Roughly one in three breaches now walks through a vendor's door. A CISO who scores vendors only on uptime and cost is measuring the wrong things.

Strategic dependency. 92% of CIOs believe vendors play a valuable role in their company's overall success. When that is true, managing those relationships on gut instinct and annual reviews is an organizational risk that no board should tolerate.

The Consolidation Wave Makes This More Urgent, Not Less

The instinct many IT leaders have is that vendor consolidation reduces management overhead. Fewer vendors, less complexity, less need for rigorous scoring. The data says the opposite.

A CIO Tech Talk community poll found that 95% of senior IT executives at mid-sized to large organizations are planning to consolidate vendors in the next 12 months. Critically, 80% cited architectural simplification as the primary driver, not cost-cutting. This is about reducing point solutions and integration debt, not just invoices.

ADAPT's CIO Edge research, covering more than 140 CIOs, found that a majority of organizations are targeting a 20% reduction in vendor count. The same research found that 90% of IT professionals identify software consolidation as a priority, even as 73% expect software investment to keep growing.

Here is the logic inversion that matters: when you concentrate 80% of your IT spend into 20% of your vendors, those remaining relationships become load-bearing. A single underperforming strategic vendor now carries more operational risk than a dozen marginal ones did before. The argument for scorecard rigor gets stronger, not weaker, precisely when vendor count goes down.

What a Well-Designed IT Vendor Scorecard Actually Measures

A vendor evaluation scorecard built for IT leaders needs six distinct scoring dimensions. Four of them overlap with general vendor management. Two of them, integration performance and AI governance, are specific to IT and are missing from the generic scorecard templates you will find online.

1. Security and Compliance Posture

For a CISO, this is not one metric among six. It is the most heavily weighted dimension. The indicators that matter are: current certifications (SOC 2 Type II, ISO 27001, FedRAMP where applicable), incident response track record, breach notification turnaround time, and whether an independent security audit has occurred in the last 12 months.

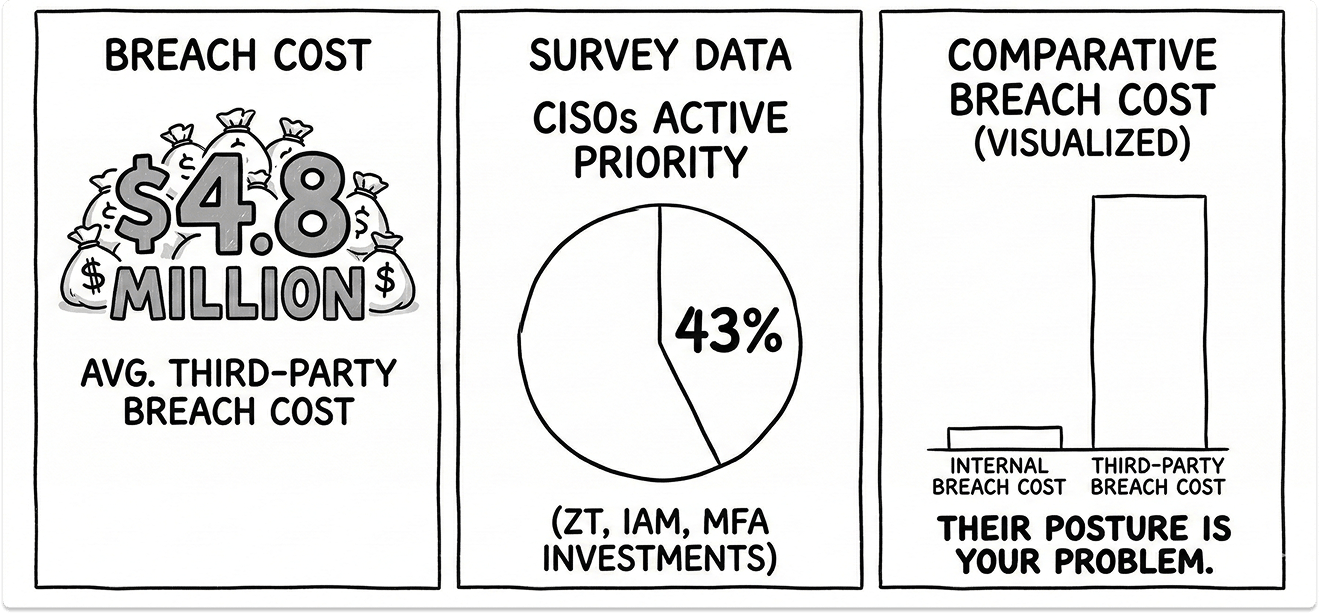

Gartner's 2025 CISO Leadership Perspectives survey of over 1,100 CISOs found that 43% are actively investing in Zero Trust, Identity and Access Management, and Multi-Factor Authentication. A vendor who cannot support or integrate with those architectures is not just inconvenient. They are a structural gap in your security posture.

When a breach originates from a third-party system, the average remediation cost reaches $4.8 million, higher than breaches caused by internal systems alone. A vendor's security posture is not their problem. It is yours.

2. SLA Adherence and Incident Response Quality

73% of organizations experienced an outage costing over $100,000 in the last year. Tracking whether a vendor met their SLA percentage is less useful than tracking how they behaved when they did not.

Score on three factors: proactive communication during an incident, time to root cause identification, and whether a post-incident review was delivered within an agreed window. A vendor who hits 99.9% uptime but goes silent during the 0.1% is more dangerous than one who misses slightly and communicates clearly throughout.
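To make that 0.1% concrete, here is a minimal sketch of how much downtime a given uptime percentage still allows. The function name and the 30-day default period are assumptions for illustration, not terms from any particular SLA.

```python
def allowed_downtime_minutes(uptime_pct: float, period_days: int = 30) -> float:
    """Return the minutes of downtime an uptime target still permits.

    uptime_pct is the contracted uptime, e.g. 99.9.
    period_days is the measurement window (assumed 30 days by default).
    """
    if not 0 <= uptime_pct <= 100:
        raise ValueError("uptime_pct must be between 0 and 100")
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    total_minutes = period_days * 24 * 60
    # Round to two decimals to hide floating-point noise.
    return round(total_minutes * (100 - uptime_pct) / 100, 2)


monthly = allowed_downtime_minutes(99.9)        # 30-day window
yearly = allowed_downtime_minutes(99.9, 365)    # 365-day window
print(f"99.9% uptime allows {monthly} min per 30 days, {yearly} min per year")
```

At 99.9%, that is roughly 43 minutes in a 30-day month. Those are the minutes in which the three factors above get scored.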

3. Integration and Interoperability Performance

This dimension does not exist in generic scorecards. It should be the third-highest weighted category for any IT leader managing a multi-vendor environment.

Every hour your engineers spend building, patching, or maintaining integrations with a vendor's API is an unaccounted cost that never appears on that vendor's invoice. Score vendors on API reliability and version stability, documentation quality, sandbox environment availability, and responsiveness to integration support requests. If a vendor's API breaks on a minor update and their support team takes 72 hours to respond, that cost is invisible in your standard vendor reporting but very visible to your engineering team.

4. AI Governance and Data Handling Transparency

This is the newest scoring dimension and the one most IT leaders are not yet measuring formally.

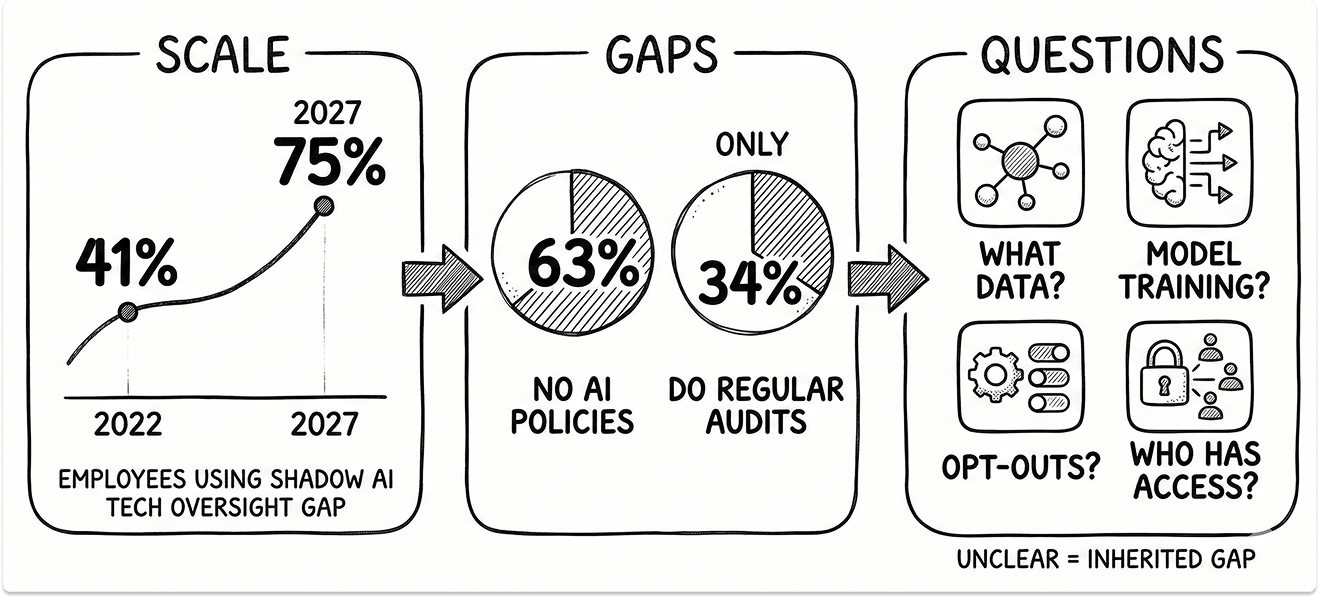

Gartner projects that 75% of employees will acquire, modify, or create technology without IT oversight by 2027, up from 41% in 2022. Shadow AI is already a material risk. 63% of organizations currently lack formal AI governance policies, and only 34% conduct regular audits to detect unauthorized AI usage.

Every vendor in your portfolio who has added an AI feature to their product in the last 18 months needs to answer four questions: What data does the AI feature process? Does the vendor use customer data to train their models? What opt-out mechanisms exist? Who within the vendor organization has access to AI-generated outputs based on your data? If a vendor cannot answer these questions clearly, that is a governance gap you are inheriting.

5. Cost Predictability and Total Cost of Ownership

Not price. Cost predictability. A vendor who charges 15% more but invoices consistently within agreed parameters is a better financial partner than one who charges less but generates three unplanned change orders per quarter.

Track unit cost trends over rolling 12-month periods, track the frequency and causes of out-of-scope billing, and benchmark each vendor against at least one alternative in the same category annually. Total cost of ownership must include integration maintenance hours, security review time, and support ticket volume, not just the invoice total.
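As a rough sketch of that total cost of ownership arithmetic, the snippet below adds internal effort to the invoice total. The field names, the blended hourly rate, and the per-ticket handling cost are all hypothetical inputs you would replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class VendorCostInputs:
    invoice_total: float          # what the vendor billed over the period
    integration_hours: float      # engineer hours spent maintaining integrations
    security_review_hours: float  # hours spent on security reviews of this vendor
    support_tickets: int          # tickets raised with or about this vendor
    hourly_rate: float            # assumed blended internal hourly cost
    cost_per_ticket: float        # assumed internal handling cost per ticket


def total_cost_of_ownership(c: VendorCostInputs) -> float:
    """Invoice total plus the internal costs that never appear on the invoice."""
    internal_hours = c.integration_hours + c.security_review_hours
    internal_cost = internal_hours * c.hourly_rate + c.support_tickets * c.cost_per_ticket
    return c.invoice_total + internal_cost


example = VendorCostInputs(
    invoice_total=120_000.0,
    integration_hours=200.0,
    security_review_hours=40.0,
    support_tickets=150,
    hourly_rate=90.0,
    cost_per_ticket=25.0,
)
print(total_cost_of_ownership(example))  # 145350.0
```

In this made-up example, the hidden internal cost adds about 21% on top of the invoice, which is the gap a price-only comparison misses.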

6. Strategic Alignment and Roadmap Confidence

Score vendors on whether their product direction aligns with where your IT strategy is going in the next 18 to 24 months. This requires an annual roadmap review, not just a performance review.

A vendor who is excellent today but is pivoting away from your use case, being acquired by a competitor, or sunsetting the product tier you depend on is a strategic risk that cost and uptime metrics will never surface. Ask directly: what does your roadmap for our product category look like in 24 months? The clarity and specificity of the answer are themselves part of the score.

The Free IT Vendor Scorecard and Template

Use the scorecard below to evaluate any current vendor across all six dimensions. No download. No signup. Adjust the category weights to reflect your organization's priorities before scoring.
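For readers who want to see the arithmetic, here is a minimal sketch of a weighted score using the 1 to 5 ratings and 100-point scale described in the FAQ. The weights are illustrative assumptions that only follow the ordering suggested above (security highest, SLA second, integration third). The normalization is also an assumption: all fives map to 100, which means all ones map to 20 rather than 0.

```python
from typing import Mapping

# Illustrative weights in percent. These are assumptions, not the article's template values.
EXAMPLE_WEIGHTS = {
    "security_compliance": 25,
    "sla_incident_response": 20,
    "integration": 17,
    "ai_governance": 13,
    "cost_predictability": 13,
    "strategic_alignment": 12,
}


def weighted_score(ratings: Mapping[str, int],
                   weights: Mapping[str, int] = EXAMPLE_WEIGHTS) -> float:
    """Combine 1-5 ratings into a score out of 100 using percentage weights."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    if set(ratings) != set(weights):
        raise ValueError("ratings and weights must cover the same dimensions")
    for name, rating in ratings.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"rating for {name} must be between 1 and 5")
    # Weighted sum ranges from 100 to 500; dividing by 5 puts the top at 100.
    return sum(weights[name] * ratings[name] for name in weights) / 5


example_ratings = {
    "security_compliance": 4,
    "sla_incident_response": 3,
    "integration": 4,
    "ai_governance": 2,
    "cost_predictability": 5,
    "strategic_alignment": 3,
}
print(weighted_score(example_ratings))  # 71.0
```

Changing the weights changes the result without touching a single rating, which is why the weights deserve as much debate as the scores themselves.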

The Four Reasons Vendor Scorecards Fail in Practice

Failure 1: Measuring what is easy instead of what is important

Teams default to metrics that come out of the ERP or ITSM system automatically. On-time delivery, ticket volume, invoice accuracy. Meanwhile, security posture, integration reliability, and roadmap alignment go unscored because they require judgment and manual input. The result is a scorecard that tells you everything about the easy stuff and nothing about the things that actually determine whether a vendor relationship is an asset or a liability.

Failure 2: The political immunity of strategic vendors

When a vendor is sole-sourced, deeply integrated, or responsible for a critical system, scoring them poorly is functionally meaningless if your organization is not prepared to act on it. Over time, this trains everyone involved to treat the scorecard as a compliance exercise. The review happens. The scores are filed. The contract renews. I have seen this pattern repeat across organizations of every size. The scorecard did not fail. The accountability structure did.

Failure 3: Building the scorecard without vendor input

A vendor who receives a scorecard they had no part in designing will optimize for the grade, not the underlying goal. This is rational behavior, not bad faith. If the on-time delivery metric does not account for the fact that your procurement team regularly submits orders with incomplete specifications, the vendor will learn to push back on incomplete orders rather than fix the actual friction. Co-designing the scorecard with your strategic vendors takes one additional meeting. It produces dramatically better data and dramatically better behavior.

Failure 4: No action cadence, only a reporting cadence

A quarterly review with no corresponding decision framework is just a meeting. Define in advance what each score threshold means: what triggers an improvement plan, what triggers a sourcing review, what qualifies a vendor for preferred status. Vendors who understand the consequences of their scores manage toward them. Vendors who see scores with no attached outcomes ignore them.

Connecting Scorecard Results to Real Vendor Decisions

A vendor scorecard template that does not feed into contract terms, renewal decisions, or order allocation is a measurement exercise with no management layer. Here is a framework that makes scores consequential.

Preferred status (score above 80/100): First consideration for expanded scope, faster payment terms, and multi-year contract options. This is the incentive tier. High-performing vendors should feel the commercial benefit of their performance.

Watch status (score from 60 to 80): Formal improvement plan required within 30 days of the review. Specific targets, specific timelines, named owner on both sides. The next review is scheduled at 60 days, not 90.

Sourcing review triggered (score below 60): Begin evaluating alternatives, even if you do not immediately switch. The vendor should know this is happening. The act of beginning a sourcing review, communicated transparently, changes vendor behavior more reliably than any improvement plan conversation.

Embedding these thresholds into vendor contracts is not adversarial. It is honest. Vendors who understand what their scores mean for the relationship have a clear map of what they need to do to protect and grow it.
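The three tiers above can be sketched as a simple lookup. The function and dictionary names are hypothetical. Treating scores of exactly 60 and exactly 80 as watch status is one reading of the thresholds, so adjust the boundaries if your contracts define them differently.

```python
def vendor_status(score: float) -> str:
    """Map a 0-100 scorecard result to one of the three tiers."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score > 80:
        return "preferred"
    if score >= 60:  # assumption: 60 and 80 themselves count as watch
        return "watch"
    return "sourcing_review"


# Follow-up actions, paraphrased from the framework above.
NEXT_STEPS = {
    "preferred": "First consideration for expanded scope and multi-year options",
    "watch": "Improvement plan within 30 days, next review at 60 days",
    "sourcing_review": "Begin evaluating alternatives and tell the vendor",
}

for score in (86, 71, 58):
    status = vendor_status(score)
    print(score, status, "->", NEXT_STEPS[status])
```

Writing the mapping down before the review, in code or in the contract, is what keeps a 58 from being argued up to a renewal.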

The Actual Purpose of an IT Vendor Scorecard

I have been in rooms where a vendor presented a beautifully formatted response to a poor scorecard. Apologetic. Detailed. Persuasive. And entirely disconnected from any structural change. The score was 58. The contract was renewed. Two quarters later, the same issues resurfaced.

The scorecard was not the problem. The absence of a pre-agreed consequence was.

A vendor scorecard for IT leaders is, at its core, a decision-making infrastructure. It turns renewal conversations from negotiation theater into evidence-based reviews. It turns budget justifications from gut instinct into defensible data. It turns security risk management from periodic audits into a continuously scored posture across your entire vendor portfolio.

With 95% of senior IT executives planning to consolidate vendors, the organizations that will execute that consolidation well are the ones who already know, with data, which vendors are genuinely earning their place in the stack. The rest will make those decisions the way they always have: based on who complains the loudest, who has the most switching friction, and who submitted their renewal paperwork first.

Your vendor portfolio is either a strategic asset or a liability you have not yet quantified. A well-built vendor scorecard is how you tell the difference.

FAQ

What is an IT vendor scorecard?

A structured tool that rates technology vendors across IT-specific dimensions — security posture, SLA quality, integration reliability, AI governance, cost predictability, and roadmap alignment — and ties those scores to defined contract outcomes.

How do you calculate vendor scorecard scores?

Rate each category from 1 to 5, weight each by strategic importance, and normalize the weighted total to a 100-point scale. The weight you assign each category is a strategic declaration, not a technical detail.

Why do vendor scorecards fail?

Four reasons: teams measure what's easy rather than what matters, strategic vendors enjoy political immunity, vendors weren't involved in designing the criteria, and reviews have no action cadence attached.

What categories should an IT vendor scorecard include?

Security and compliance, SLA and incident response, integration performance, AI governance, cost predictability, and strategic roadmap alignment. Weight the first two highest.

How often should you review vendor scorecards?

By risk and performance, not by calendar. Underperforming critical vendors: monthly. Stable strategic vendors: twice a year. High-performing, low-risk vendors: annually.