EKS vs AKS vs GKE: The IT Leader's Guide to Choosing a Managed Kubernetes Service

EKS vs AKS vs GKE: a direct comparison of pricing, SLAs, compliance coverage, and AI/ML fit for IT leaders choosing a managed Kubernetes service in 2026.

Choosing between Amazon EKS, Azure Kubernetes Service, and Google Kubernetes Engine is one of the most consequential infrastructure decisions an IT leader will make this decade.

These three managed Kubernetes platforms now run the majority of enterprise container orchestration workloads globally, but they were built with different organizations in mind, for different problems, with different cost structures underneath.

This EKS vs AKS vs GKE guide cuts through the engineering-level feature comparisons and gives you what you actually need: a strategic framework for making the right call for your organization.

What "Managed Kubernetes" Actually Means for Your Organization

There is a persistent misconception in vendor conversations that "fully managed" means your team is off the hook. It doesn't.

Every managed Kubernetes service in this comparison handles the control plane: the API server, etcd, the scheduler, and the controller manager. That is the baseline. What changes between platforms is how much of the surrounding operational model gets automated and how much stays with your engineers.

Kubernetes orchestration at enterprise scale involves far more than keeping the control plane healthy. Version upgrade planning, identity and access model design, network policy enforcement, cost governance, and compliance configuration all remain your team's responsibility regardless of which platform you choose.

The three flagship "hands-off" modes, EKS Auto Mode, AKS Automatic, and GKE Autopilot, push the automation ceiling higher than ever, but they do not eliminate operational ownership. They redistribute it.

Understanding that distinction upfront will save you from a very expensive misalignment between what procurement thinks you're buying and what your engineering team actually inherits.

Amazon EKS, Azure AKS, and Google GKE: Strategic Profiles for IT Leaders

Before comparing features, it helps to understand what each platform was fundamentally built to do. Each one has a clear primary constituency, and the further your organization sits from that constituency, the more friction you will encounter.

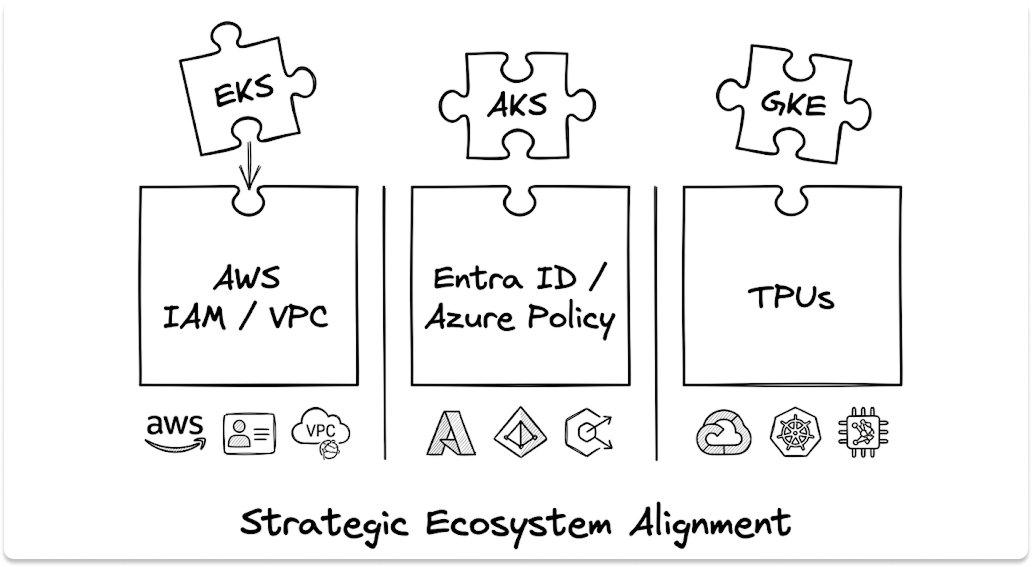

Amazon EKS (Elastic Kubernetes Service): Built for AWS-Native Scale

Amazon EKS is the managed Kubernetes service for organizations running deep into the AWS ecosystem. Its competitive advantage is not any single feature; it is the depth of integration with AWS infrastructure your team already manages.

IAM, VPC, CloudWatch, GuardDuty, ECR, and over 200 AWS services connect to EKS natively, without third-party tooling or translation layers.

EKS Auto Mode is AWS's most aggressive automation offering to date. With a single configuration change, it fully automates compute provisioning, storage, networking, and node lifecycle management.

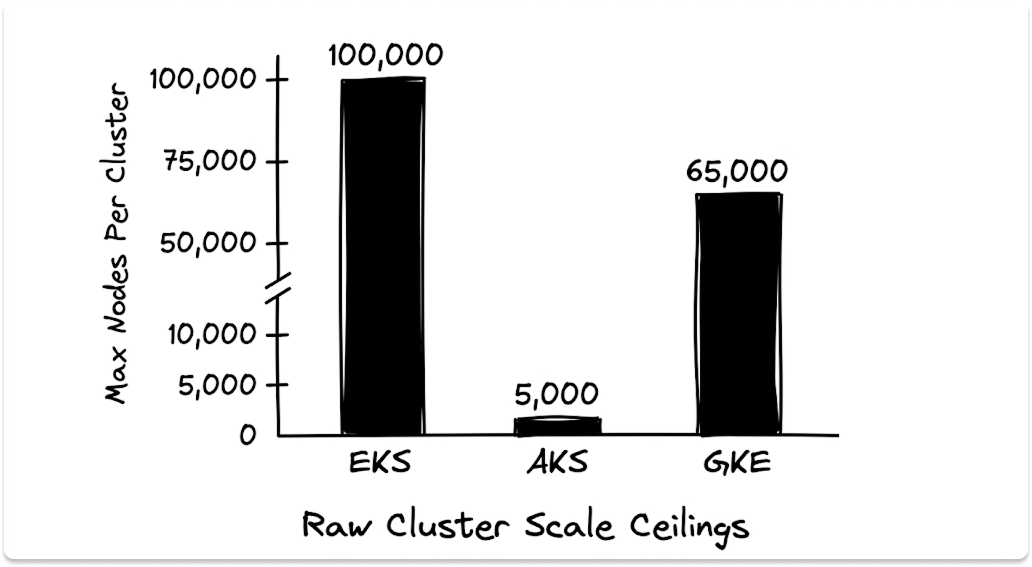

For AI and ML workloads specifically, Amazon EKS supports clusters of up to 100,000 worker nodes and can manage up to 1.6 million Trainium chips or 800,000 NVIDIA GPUs within a single cluster. No other Kubernetes service in this comparison matches that ceiling for raw compute.

The customer results speak to what EKS delivers at scale. Riot Games cut $10 million in annual infrastructure costs after migrating to Amazon EKS. Sony Interactive Entertainment achieved 60% lower operational costs and 5x faster deployments. Jaguar Land Rover accelerated pipeline build times by 95%.

Azure AKS (Azure Kubernetes Service): Built for the Microsoft Ecosystem

Azure AKS is the managed Kubernetes platform for organizations running Microsoft infrastructure. Its deepest differentiator is identity: Azure Kubernetes Service authenticates through Microsoft Entra ID, which means your cluster access model is built directly on the same identity system your organization uses for Microsoft 365, Azure DevOps, and every other Microsoft service. There is no separate credentials layer to build and maintain.

Governance follows the same pattern. Azure Policy applies compliance guardrails directly to AKS clusters, using the same policy framework your Azure environment already enforces. For organizations that have invested in Azure governance, this integration is genuinely valuable rather than a marketing claim.

On compliance, Azure AKS carries the broadest federal coverage of the three platforms. Through Azure Government, it holds FedRAMP High authorization and supports DoD IL2, IL4, and IL5 workloads, with IL6 workloads running on Azure Government Secret.

No other Kubernetes service in this comparison matches that depth for US federal and defense requirements. Microsoft was also named a Leader in the 2025 Gartner Magic Quadrant for Container Management, a signal worth referencing in board-level vendor evaluations.

Google GKE (Google Kubernetes Engine): Built by the Creators of Kubernetes

Google GKE occupies a unique position: Google created Kubernetes, open-sourced it in 2014, and donated it to the CNCF in 2015. That heritage means Google Kubernetes Engine consistently runs the most upstream implementation of Kubernetes, typically first to support new features and tightest in alignment with the open-source project.

GKE's strongest current differentiation is in AI workload economics. Google claims that GKE's inference capabilities reduce serving costs by over 30% compared to other managed offerings, cut tail latency by 60%, and increase throughput by up to 40% through gen AI-aware scaling.

For organizations running AI models on Google's Tensor Processing Units, GKE is the only option in this comparison. TPUs are exclusive to Google Cloud; there is no equivalent on EKS or AKS.

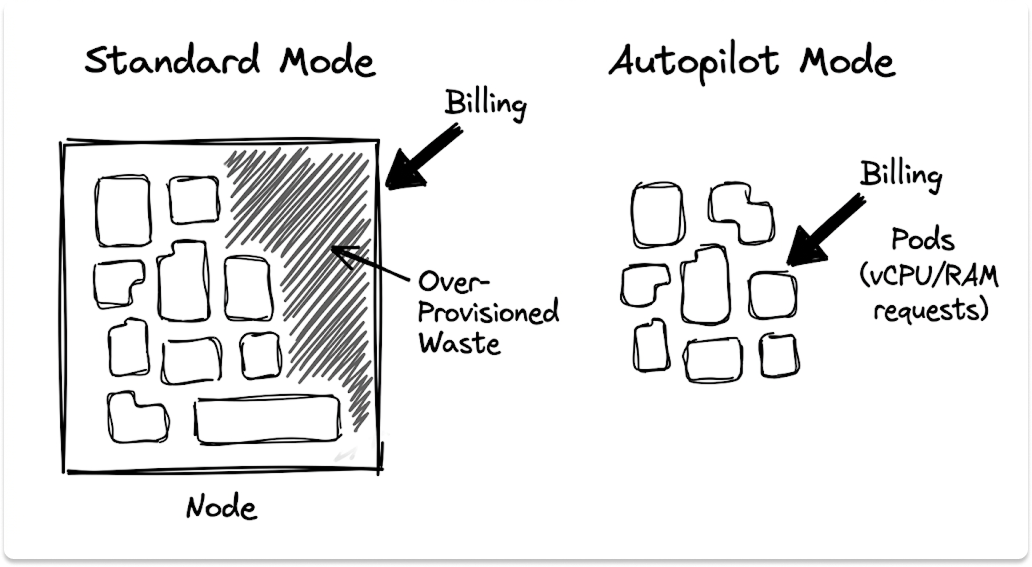

GKE Autopilot also introduces a structurally different cost model. Where EKS and AKS charge based on the nodes you provision, Autopilot charges based on the CPU, memory, and storage your pods actually request.

That shift eliminates node-level over-provisioning as a cost driver, which matters considerably for organizations running variable or bursty workloads.

EKS vs AKS vs GKE: Full Platform Comparison for Enterprise Decision-Makers

Kubernetes Orchestration Capabilities: How Each Platform Manages Workloads at Scale

Container orchestration at enterprise scale requires more than keeping pods running. It requires intelligent autoscaling, predictable upgrade behavior, and operational visibility across clusters, regions, and teams.

Amazon EKS uses Managed Node Groups for node lifecycle management and Auto Mode for full infrastructure automation. Cluster autoscaling integrates with EC2 Spot Instances, which can reduce node costs by up to 90% for fault-tolerant workloads. The Kubernetes orchestration model on EKS is powerful but requires AWS-fluent engineers to configure correctly, particularly around IAM role design, VPC CNI networking, and CloudWatch observability setup.

Azure AKS supports the Kubernetes Event Driven Autoscaler (KEDA), the cluster autoscaler, and horizontal pod autoscaling natively. AKS Automatic handles node provisioning, scaling, and upgrades without manual intervention. The platform also supports virtual nodes that burst into Azure Container Instances when demand spikes, giving teams a cost-effective overflow layer without pre-provisioning capacity.

Google GKE goes furthest on autoscaling sophistication, supporting Horizontal Pod Autoscaling, Vertical Pod Autoscaling, and Multidimensional Pod Autoscaling simultaneously. The release channel model (Rapid, Regular, Stable, Extended) gives IT leaders a governed way to balance upgrade freshness against stability risk, a more formalized upgrade governance mechanism than either EKS or AKS provides.

SLA and Availability: What Each Managed Kubernetes Service Actually Guarantees

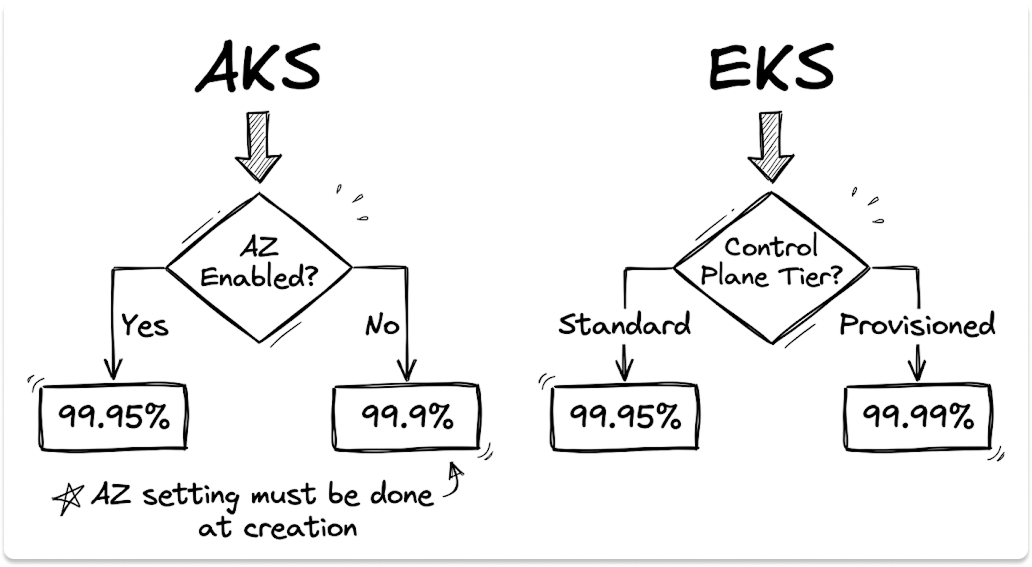

The SLA headlines look similar across all three platforms at 99.95%, but the fine print carries real differences that matter at the contract level.

Amazon EKS offers 99.95% on the Standard Control Plane and 99.99% on the Provisioned Control Plane, which carries an additional cost starting at $1.65/cluster/hour for the XL tier. The 99.99% SLA is not the default; you pay to access it. EKS also has the most favorable credit structure: monthly uptime below 95% triggers a 100% service credit.

Azure AKS delivers 99.95% only when Availability Zones are explicitly enabled at cluster creation time. Clusters without AZs are backed by 99.9%. The AZ configuration cannot be added to a running cluster, so this is an architecture decision that must be made before deployment, not after. The Free tier carries no financial SLA commitment at all. Additionally, AKS splits availability coverage across two separate SLAs: the API server SLA and a separate Azure Virtual Machines SLA for agent nodes.

Google GKE offers 99.95% for Regional and Autopilot clusters, and 99.5% for Zonal clusters. The maximum financial credit for extended outages is capped at 50% of the monthly bill, lower than the 100% maximum credit on EKS. GKE also defines downtime as five or more consecutive minutes of complete connectivity loss, meaning disruptions shorter than five minutes do not qualify for credits regardless of their operational impact.
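To make the SLA percentages above concrete, the short sketch below converts each one into a monthly downtime budget. It assumes a 30-day measurement month; each provider's contract defines its own measurement window and exclusions, so treat the output as an approximation rather than a contractual figure.

```python
# Assumption: the SLA is measured over a 30-day month (43,200 minutes).
MINUTES_PER_MONTH = 30 * 24 * 60


def allowed_downtime_minutes(sla_percent: float) -> float:
    """Downtime budget in minutes per 30-day month for a given uptime SLA."""
    return MINUTES_PER_MONTH * (100 - sla_percent) / 100


# SLA percentages quoted in this section.
SLA_TIERS = {
    "EKS Provisioned Control Plane": 99.99,
    "EKS Standard / AKS with AZs / GKE Regional or Autopilot": 99.95,
    "AKS without AZs": 99.9,
    "GKE Zonal": 99.5,
}

for name, sla in SLA_TIERS.items():
    budget = allowed_downtime_minutes(sla)
    print(f"{name}: {sla}% uptime allows {budget:.1f} min/month")
```

Under that assumption, 99.99% allows about 4.3 minutes of downtime a month, 99.95% about 21.6 minutes, 99.9% about 43.2 minutes, and 99.5% about 216 minutes.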

Total Cost of Ownership: What EKS, AKS, and Google GKE Actually Cost at Enterprise Scale

Here is a fact that every vendor glosses over: the $0.10/cluster/hour base fee is identical across Amazon EKS, Azure AKS Standard tier, and Google GKE. At that price point, they look like commodities. They are not.

The real cost sits in the layers underneath. Compute nodes, persistent storage, cross-AZ egress traffic, load balancer IP addresses, observability tooling, managed add-ons, and support tiers all accrue separately on every platform.

At a mid-size enterprise footprint of 20 clusters across two regions, your true monthly infrastructure cost will typically run 10 to 20 times the cluster management fee before a single application workload runs.

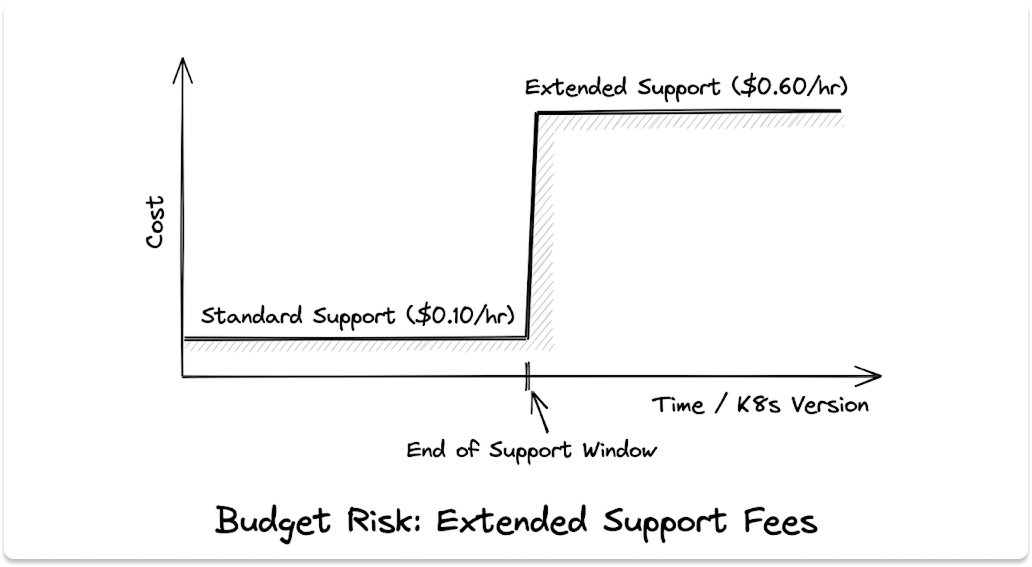

The single most dangerous hidden cost across all three platforms is extended Kubernetes version support. If your team misses an upgrade cycle and runs a cluster on a version past its standard support window, the per-cluster fee jumps from $0.10 to $0.60 per hour on all three platforms.

That is a 6x increase. At 20 clusters running on extended support for 12 months, the extra $0.50 per cluster-hour adds up to roughly $87,600 per year in unplanned spend (20 clusters × 8,760 hours × $0.50). Version upgrade planning is not an engineering nicety; it is a budget risk.
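The extended-support arithmetic is simple enough to check for your own cluster count. The sketch below assumes clusters run continuously for 8,760 hours a year and uses the $0.10 and $0.60 hourly fees quoted above; the function names are illustrative. Under that assumption the premium for 20 clusters comes to roughly $87,600 a year, and a shorter billing year gives a slightly lower figure.

```python
# Assumption: clusters run continuously, so a year is 24 * 365 = 8,760 hours.
HOURS_PER_YEAR = 24 * 365

# Per-cluster hourly fees quoted in the text, held in cents to avoid float drift.
STANDARD_FEE_CENTS = 10
EXTENDED_FEE_CENTS = 60


def annual_cluster_fees(clusters: int, fee_cents: int, hours: int = HOURS_PER_YEAR) -> float:
    """Annual cluster management fees in USD."""
    return clusters * hours * fee_cents / 100


def extended_support_premium(clusters: int, hours: int = HOURS_PER_YEAR) -> float:
    """Extra annual spend in USD from paying the extended rate instead of the standard one."""
    extended = annual_cluster_fees(clusters, EXTENDED_FEE_CENTS, hours)
    standard = annual_cluster_fees(clusters, STANDARD_FEE_CENTS, hours)
    return extended - standard


clusters = 20
print(f"Standard fees: ${annual_cluster_fees(clusters, STANDARD_FEE_CENTS):,.0f}")
print(f"Extended fees: ${annual_cluster_fees(clusters, EXTENDED_FEE_CENTS):,.0f}")
print(f"Premium:       ${extended_support_premium(clusters):,.0f}")
```

Swapping in your own cluster count, or a shorter `hours` value for clusters that only spend part of the year on extended support, gives a budget-risk figure you can put in front of finance.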

EKS has the most complex billing structure of the three. You are managing cluster fees, EC2 node instance costs, EBS storage volumes, cross-AZ network traffic, CloudWatch ingestion, Auto Mode per-instance management surcharges, and individual add-on fees for capabilities like Argo CD and ACK. Without mature FinOps practices and proper cost allocation tagging, EKS bills are difficult to forecast and easy to misattribute.

AKS offers the most accessible entry point with a free control plane tier, but the cost math changes considerably when you add AKS Automatic. The per-vCPU management surcharge runs from $7.05/vCPU/month for general-purpose nodes to $32.29/vCPU/month for GPU-accelerated compute. A cluster running 50 GPU vCPUs adds $1,614.50/month in management fees alone, on top of the underlying VM costs.
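The AKS Automatic surcharge can be estimated the same way. This sketch models only the two per-vCPU rates quoted above (general-purpose and GPU); any intermediate rates and the underlying VM costs are deliberately left out, and the mixed-cluster sizes are hypothetical.

```python
# Monthly AKS Automatic management surcharges in USD per vCPU, as quoted in the text.
GENERAL_PURPOSE_RATE = 7.05
GPU_RATE = 32.29


def automatic_surcharge(general_vcpus: int = 0, gpu_vcpus: int = 0) -> float:
    """Monthly management surcharge in USD, excluding the underlying VM costs."""
    total = general_vcpus * GENERAL_PURPOSE_RATE + gpu_vcpus * GPU_RATE
    return round(total, 2)


# The 50 GPU vCPU example from the text.
print(f"50 GPU vCPUs: ${automatic_surcharge(gpu_vcpus=50):,.2f}/month")

# Hypothetical mixed cluster: 200 general-purpose vCPUs plus 50 GPU vCPUs.
mixed = automatic_surcharge(general_vcpus=200, gpu_vcpus=50)
print(f"Mixed cluster: ${mixed:,.2f}/month")
```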

GKE's Autopilot model is the most structurally efficient for variable workloads because you pay for pod resource requests rather than provisioned nodes. The trade-off is that cost optimization shifts from node right-sizing to pod spec accuracy. Teams that set loose or inaccurate resource requests in their pod specifications will see the same billing waste that Autopilot was designed to eliminate.
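The effect of loose resource requests under request-based billing can be sketched as follows. The unit prices and the example workload are hypothetical placeholders, not published GKE rates; the point is the ratio between what a pod requests and what it uses.

```python
from dataclasses import dataclass

# Hypothetical unit prices for illustration only; these are NOT published GKE rates.
PRICE_PER_VCPU_HOUR = 0.05
PRICE_PER_GIB_HOUR = 0.005


@dataclass
class PodProfile:
    name: str
    cpu_request: float  # vCPU requested in the pod spec
    cpu_used: float     # vCPU actually used on average
    mem_request: float  # GiB requested in the pod spec
    mem_used: float     # GiB actually used on average
    replicas: int = 1


def hourly_cost(cpu: float, mem_gib: float) -> float:
    """Hourly cost in USD of a given CPU and memory amount."""
    return cpu * PRICE_PER_VCPU_HOUR + mem_gib * PRICE_PER_GIB_HOUR


def billed_per_hour(pod: PodProfile) -> float:
    """What request-based billing charges: the requests, not the usage."""
    return pod.replicas * hourly_cost(pod.cpu_request, pod.mem_request)


def wasted_per_hour(pod: PodProfile) -> float:
    """Hourly spend on resources that were requested but not used."""
    used_cpu = min(pod.cpu_used, pod.cpu_request)
    used_mem = min(pod.mem_used, pod.mem_request)
    return billed_per_hour(pod) - pod.replicas * hourly_cost(used_cpu, used_mem)


# Hypothetical workload: requests set at four times observed usage.
api = PodProfile("api", cpu_request=2.0, cpu_used=0.5,
                 mem_request=4.0, mem_used=1.0, replicas=10)

waste_share = wasted_per_hour(api) / billed_per_hour(api)
print(f"{api.name}: billed ${billed_per_hour(api):.2f}/h, "
      f"wasted ${wasted_per_hour(api):.2f}/h ({waste_share:.0%})")
```

In this example three quarters of the bill pays for headroom the pods never touch, which is the same waste pattern as an over-provisioned node pool, moved into the pod spec.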

Vendor Lock-in Risk: What IT Leaders Need to Know Before Choosing a Kubernetes Service

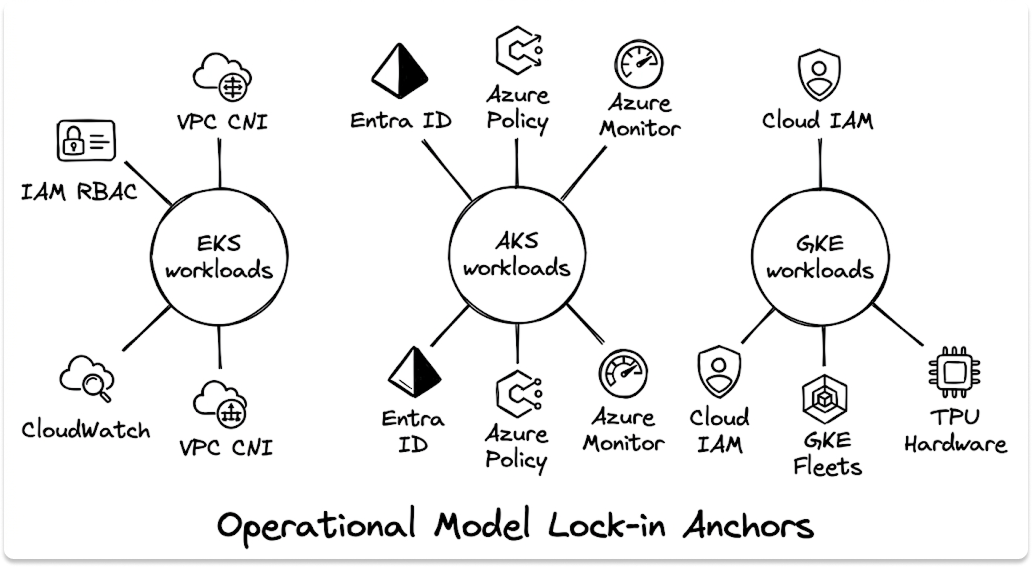

Every managed Kubernetes service in this comparison runs certified, CNCF-conformant Kubernetes. Your application workloads (the Deployments, Services, ConfigMaps, and Helm charts) are portable. Your operational model is not.

This distinction matters enormously when evaluating long-term platform risk. Migrating container workloads between platforms is a realistic project. Migrating the identity architecture, governance framework, observability stack, and networking model that surrounds those workloads is a multi-quarter re-engineering effort.

Amazon EKS binds your operational model to IAM-integrated RBAC, VPC CNI networking, CloudWatch observability, and AWS-native security tooling. Organizations that adopt AWS Controllers for Kubernetes (ACK) to manage AWS services from Kubernetes manifests add another layer of proprietary dependency. The deeper the AWS integration, the higher the migration cost of any future platform change.

Azure AKS binds your operational model to Microsoft Entra ID authentication, Azure Policy governance, Azure Monitor observability, and Azure Arc hybrid management. For Microsoft-first organizations, this is not a risk; it is the point. For organizations pursuing multi-cloud portability, the identity re-architecture cost of migrating away from Entra ID-based RBAC is substantial.

Google GKE binds your operational model to Google Cloud IAM, Workload Identity Federation, GKE Fleets multi-cluster management, and Google Cloud Observability. Teams running TPU-based AI workloads carry the highest migration risk of the three: Google's Tensor Processing Units have no equivalent on EKS or AKS, meaning any platform migration requires rebuilding the AI inference infrastructure from scratch.

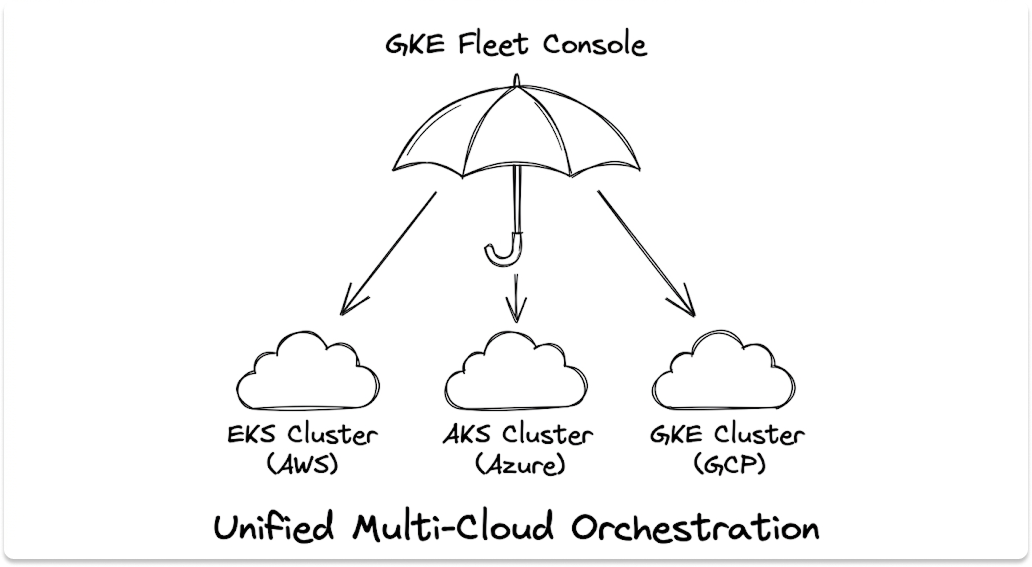

Where GKE does offer a genuine open-standards advantage is in its Attached Clusters capability. Google Kubernetes Engine can govern EKS and AKS clusters from a single GKE Fleet console.

No other platform in this comparison offers cross-cloud Kubernetes management in the same way, making GKE the strongest choice for organizations that want unified multi-cloud Kubernetes orchestration without standardizing on a single cloud provider for all workloads.

Compliance and Security: How Amazon EKS, Azure AKS, and Google Kubernetes Engine Handle Regulated Workloads

Compliance Framework Coverage: EKS vs AKS vs GKE by Industry

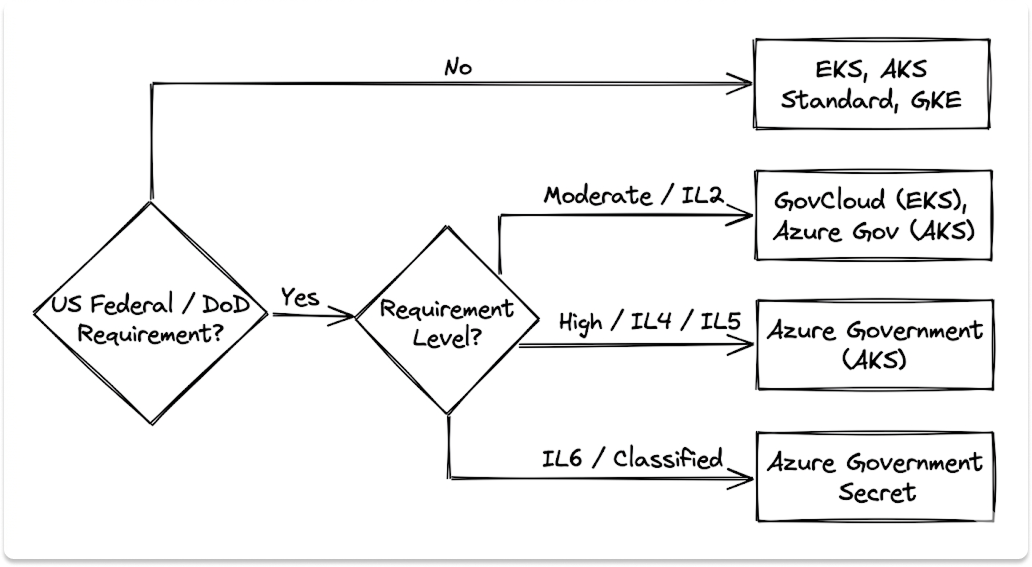

For US federal and defense organizations with FedRAMP High or DoD IL4/IL5/IL6 requirements, Azure AKS on Azure Government is the clearest path. AWS EKS on GovCloud is a viable alternative for Moderate-level requirements. Google GKE via Assured Workloads supports FedRAMP compliance, but it requires additional configuration overhead and does not provide an isolated government cloud infrastructure equivalent to Azure Government or AWS GovCloud.

For healthcare organizations, all three platforms qualify as HIPAA-eligible services. The differentiating factor shifts to confidential computing capabilities. Both Azure AKS and Google GKE support hardware-based Trusted Execution Environments for container workloads. Amazon EKS does not offer an equivalent native confidential computing capability.

For international enterprises navigating multi-jurisdiction data privacy requirements, Google Kubernetes Engine holds ISO/IEC 27018 (cloud privacy) and ISO/IEC 27701 (privacy management) certifications that provide stronger documented coverage than either EKS or AKS for cross-border data processing scenarios.

Security Architecture Built Into Each Managed Kubernetes Platform

Amazon EKS security is built around AWS-native tooling. IAM-integrated RBAC, EKS Pod Identity for workload authentication, GuardDuty runtime threat detection, VPC CNI networking with Calico network policies, and AWS CloudTrail audit logging form the core security stack. Container image signature verification through AWS Signer adds supply chain protection. For AWS-fluent security teams, this model is coherent and well-integrated.

Azure AKS adds confidential computing nodes for hardware-level workload isolation and enforces governance through Azure Policy, which integrates with your organization's existing Azure security posture rather than requiring a separate policy layer. The Entra ID authentication model is particularly strong for organizations with mature Microsoft identity governance programs.

Google GKE has the strongest security-by-default posture of the three. Nodes run on Google's Container-Optimized OS, a purpose-built hardened operating system with a locked-down firewall, read-only filesystem, and disabled root login. GKE Sandbox uses gVisor kernel isolation to protect the node OS from untrusted workloads. Binary Authorization enforces software supply chain policies by allowing only cryptographically signed images to deploy, with Continuous Validation monitoring running pods in real time. In Autopilot mode, all of these protections are applied automatically without manual configuration.

Which Managed Kubernetes Service Is Right for Your Organization?

Before You Sign: 7 Questions Every IT Leader Should Ask Their Kubernetes Service Provider

Vendor demonstrations show you the best-case path. Your job is to pressure-test the realistic one.

1. What is our true TCO at expected cluster count and node footprint? Ask for a line-item breakdown that includes cluster fees, compute, storage, egress, monitoring, and support tier costs. The headline price is not the operating price.

2. What happens to our budget if we miss a Kubernetes version upgrade cycle? All three platforms charge $0.60/cluster/hour for extended support. Get your team to model the cost at your current cluster count before this scenario occurs.

3. How does this platform's identity model map to our existing IAM infrastructure? The answer determines your long-term migration optionality and your team's day-one configuration workload.

4. What are our data egress costs between zones, regions, and external services? Cross-AZ traffic, regional egress, and internet-bound traffic are billed separately on all three platforms. In multi-region deployments, this line item grows faster than most teams expect.

5. Which compliance frameworks do we need today, and which will we need in three years? Platform compliance coverage evolves. If your organization has a realistic path toward federal contracts or defense work, model that now.

6. What does our team need to operate this platform effectively? Each platform requires different expertise. EKS requires AWS depth. AKS requires Microsoft Azure fluency. GKE requires Google Cloud proficiency. Be honest about your current skill set and what hiring or training gaps exist.

7. What does migrating away from this platform actually cost? Ask your vendor to walk you through what a migration would involve for your identity architecture, networking model, and observability stack. The answer will clarify how much operational lock-in you are accepting.
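For the first question, a line-item model does not need to be elaborate to be useful. The sketch below is one minimal way to structure it: the $0.10 hourly cluster fee comes from this guide, while the 730-hour month and every other dollar amount are hypothetical placeholders to be replaced with figures from your vendor's quote.

```python
from typing import Dict

HOURS_PER_MONTH = 730        # assumption: average hours in a month
CLUSTER_FEE_PER_HOUR = 0.10  # base cluster fee quoted in this guide, in USD


def monthly_tco(clusters: int, other_line_items: Dict[str, float]) -> Dict[str, float]:
    """Combine the cluster fee with the other monthly line items, in USD."""
    breakdown = {"cluster_fees": clusters * CLUSTER_FEE_PER_HOUR * HOURS_PER_MONTH}
    breakdown.update(other_line_items)
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Hypothetical monthly figures for a 20-cluster footprint; replace with real quotes.
estimate = monthly_tco(
    clusters=20,
    other_line_items={
        "compute": 18_000.0,
        "storage": 2_500.0,
        "egress": 3_000.0,
        "monitoring": 2_000.0,
        "support": 1_500.0,
    },
)

for item, cost in estimate.items():
    print(f"{item:>12}: ${cost:>10,.2f}")

multiple = estimate["total"] / estimate["cluster_fees"]
print(f"Total is {multiple:.1f}x the headline cluster fee")
```

Asking each vendor to fill in the same line items makes the quotes directly comparable, and the final multiple shows how little of the bill the headline fee represents.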

The right managed Kubernetes service is not the one with the longest feature list or the lowest headline price. It is the one that fits where your organization already lives: your cloud ecosystem, your compliance obligations, your team's skills, and your long-term infrastructure strategy. Choose the platform that compounds what you have built, not the one that requires you to rebuild it.


FAQ

What is the cheapest managed Kubernetes service: EKS, AKS, or GKE?

The control plane fee is identical across all three at $0.10/cluster/hour. AKS offers a Free tier, but it carries no SLA and isn't suitable for production. GKE Autopilot charges per pod resource request rather than provisioned nodes, making it the most cost-efficient option for variable workloads. EKS has the most complex billing surface — node costs, egress, CloudWatch, and add-on fees all stack on top of the base fee.

How do EKS Auto Mode, AKS Automatic, and GKE Autopilot compare?

All three automate node provisioning and lifecycle, but differ in scope. GKE Autopilot is the most complete: it manages nodes, applies security hardening by default, and bills per pod. EKS Auto Mode enforces a 21-day maximum node runtime, making it better for stateless workloads than long-running AI training. AKS Automatic adds a hosted control plane fee of $116.80/month plus per-vCPU surcharges of up to $32.29/vCPU/month for GPU nodes.

Which managed Kubernetes platform is best for FedRAMP and DoD compliance?

Azure AKS on Azure Government is the only option in this comparison with FedRAMP High and DoD IL4/IL5 authorization. DoD IL6 runs on Azure Government Secret. AWS EKS on GovCloud covers FedRAMP Moderate. GKE supports FedRAMP through Assured Workloads but has no dedicated government cloud equivalent to Azure Government or AWS GovCloud.

What does Kubernetes vendor lock-in actually mean in practice?

Your application workloads — deployments, services, Helm charts — are portable. Your operational model is not. EKS ties you to IAM RBAC and CloudWatch. AKS ties you to Entra ID and Azure Policy. GKE ties you to Workload Identity Federation and GKE Fleets. TPU-based AI workloads on GKE carry the highest migration risk — Google TPUs have no equivalent on EKS or AKS.

Is GKE better than EKS for AI and machine learning workloads?

It depends on your hardware. For TPU-based workloads, GKE is the only option, because TPUs are exclusive to Google Cloud. For GPU and Trainium workloads at extreme scale, EKS supports up to 100,000 nodes and 800,000 NVIDIA GPUs in a single cluster, a ceiling GKE doesn't match. Google claims that GKE's inference capabilities reduce serving costs by over 30% and cut tail latency by 60%, which makes GKE the stronger choice for inference optimization if those figures hold for your workloads.