Why Your Inbound Funnel Looks Healthy But Your Win Rate Says Otherwise

The easiest pipeline to build is the pipeline you are least likely to close. The 2026 B2B conversion problem starts before the meeting — and most vendors have no idea.

If you ask most B2B revenue leaders what their best source of pipeline is, they will say inbound. The buyer reached out. They requested a demo. They downloaded the report. They are warm, they are qualified, and they are ours to close.

The data says otherwise — and the gap between what revenue leaders believe about their inbound funnel and what is actually happening inside it is one of the most expensive blind spots in B2B sales today.

I run a B2B IT matchmaking platform. We deliver thousands of qualified appointments a year between technology buyers and the vendors trying to reach them, and we analyze those conversations at scale through our MatchIQ platform.

What we see consistently is this: vendors are not losing because they are getting bad leads. They are losing because they have built the wrong funnel — one that is structurally optimized for the deals they are least likely to win.

That is not a lead quality problem. It is a pipeline architecture problem. And it starts long before anyone picks up the phone.

The win rate nobody is tracking

According to 2025 research from Emblaze, between 69% and 83% of B2B opportunities are now reactive — meaning the buyer initiated contact. That is the overwhelming majority of most vendors' pipelines. And those reactive opportunities win at rates between 18% and 25%.

Proactive, seller-initiated opportunities — the ones most B2B companies treat as harder, colder, and less efficient — win at rates between 33% and 41%.

The deal that walked through your door is roughly half as likely to close as the deal you went out and built. Sit with that for a moment. It means a vendor whose pipeline is 80% inbound is carrying a structural win-rate penalty on the vast majority of its deals. Most such vendors do not know it, because they have never separated their pipeline by initiation source.

Most B2B revenue dashboards report deals by stage and by source. Almost none separate reactive from proactive opportunities or track win rates against that distinction.

The first question every revenue leader should be able to answer in 2026 is: what percentage of our pipeline is reactive, and what is our win rate on each side? If you cannot answer that, you do not know what your real pipeline efficiency looks like. You know what your funnel looks like. Those are not the same thing.
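To make that question concrete, here is a minimal sketch of the split, assuming you can export closed opportunities with a flag for who initiated first contact. The field names and the sample deals are invented for illustration and do not come from any particular CRM.

```python
from collections import defaultdict

# Hypothetical export of closed opportunities: (initiated_by, won).
# The sample data is invented purely to illustrate the calculation.
closed_deals = [
    ("buyer", True), ("buyer", False), ("buyer", False), ("buyer", False),
    ("buyer", False), ("buyer", True), ("buyer", False), ("buyer", False),
    ("seller", True), ("seller", False),
]

def win_rates_by_initiation(deals):
    """Return {initiator: (share_of_pipeline, win_rate)}."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for initiated_by, won in deals:
        totals[initiated_by] += 1
        if won:
            wins[initiated_by] += 1
    n = len(deals)
    return {k: (totals[k] / n, wins[k] / totals[k]) for k in totals}

summary = win_rates_by_initiation(closed_deals)
for initiator, (share, rate) in summary.items():
    print(f"{initiator}: {share:.0%} of pipeline, {rate:.0%} win rate")
```

The point of the sketch is the grouping key. Once "who made the first move" is a field on every opportunity, both numbers fall out of a single pass over closed deals.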

The inbound confirmation problem

The reason inbound underperforms is not complicated, but it is uncomfortable.

According to 6sense's 2024 research, 81% of B2B buyer-vendor first contacts are now initiated by the buyer. Their 2025 update went further: when buyers initiate outreach, they overwhelmingly reach out first to the vendor they already intend to buy from.

The inbound demo request is not, in most cases, the start of a buying conversation. It is the start of a confirmation conversation.

The buyer has already done their research. They have already formed a preferred choice. They are reaching out to that preferred choice first — and other vendors are receiving requests as backup options, competitive benchmarks, or internal proof points that the buying committee evaluated more than one option.

The seller running the demo on a buyer-initiated meeting is, statistically, often running a demo for a buyer who is already mentally committed to someone else.

This is what the 18% to 25% win rate on reactive deals looks like in practice. Most reactive deals are second-place auditions. The vendor does not know that going in. The buyer does.

Where the shortlist actually forms

To understand why buyers arrive at first meetings with a preferred choice already in mind, you have to look upstream — before any vendor has been contacted at all.

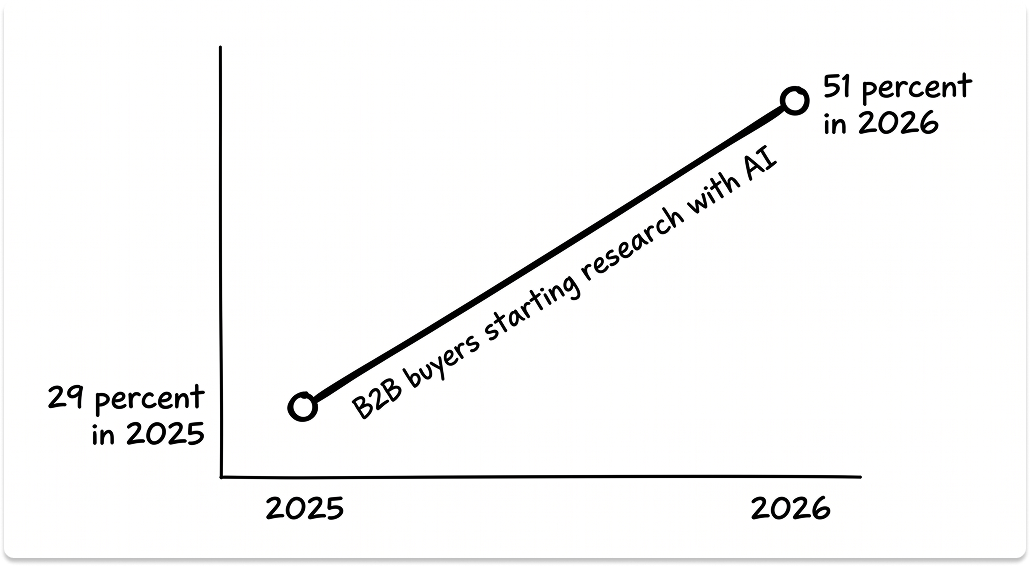

In April 2026, G2 published the results of a survey of more than 1,000 B2B software buyers. The finding that should be reshaping marketing-team priorities everywhere: 51% of B2B buyers now begin their software research in an AI chatbot rather than a traditional search engine. One year earlier, the same survey put that number at 29%.

The starting point of the B2B buyer journey moved, in twelve months, from a search bar to a chat window. There is no precedent in the past decade of B2B marketing for a behavioral shift this fast.

The downstream effects are not marginal. In the same G2 data, 69% of buyers said an AI chatbot led them to select a different vendor than the one they had originally planned to evaluate.

One-third purchased from a vendor they had never heard of before the AI surfaced it. 93% said AI chatbots have fundamentally changed how they conduct research.

The mechanism is now clear. A buyer asks ChatGPT, Claude, Perplexity, or Gemini what the best vendors are for a given category.

The AI returns an answer that typically names three to five vendors, often with brief descriptions of what each is known for. The buyer reads the answer. The shortlist forms. The buyer never sees a search engine results page. The buyer never visits a review site. The buyer never clicks an ad.

By the time that buyer contacts any vendor — if they contact them at all — the shortlist is already set, the comparison is largely complete, and a preferred choice has formed in the buyer's mind.

This is consistent with Forrester's 2025 finding that 61% of the buyer journey now completes before any vendor is contacted. The inbound confirmation dynamic is not a sales problem. It is the output of an AI-mediated discovery process that most vendors are not measuring, not managing, and in many cases not even aware of.

Your AI visibility is shaping your pipeline whether you measure it or not

The first question every CMO should be able to answer in the second half of 2026 is: when a buyer asks an AI tool about our category, what does the AI say about us? Most cannot answer it.

There is no standardized reporting tool for AI-mediated discovery the way there is for SEO. The category is too new, the underlying models change too fast, and the queries that matter are too varied to track with the dashboards that exist today.

But the absence of measurement does not mean the absence of impact.

The optimization rules for AI visibility are different from the ones that govern search — and the difference catches a significant number of well-resourced marketing teams off guard.

Search engines reward backlinks, structured content, and keyword targeting. Large language models reward something subtly different: authoritative third-party citations, ungated long-form content, structured data the model can parse, and consistent presence across the corpus the model was trained on.

A vendor with strong SEO but weak third-party validation — in independent publications, named analyst reports, and active review-site profiles — will often rank well in Google and poorly in ChatGPT. Many vendors are already in this position and do not yet know it.

The competitive implication is equally important. G2's finding that one-third of B2B software buyers purchased from a vendor they had never heard of before an AI surfaced it means AI is introducing buyers to vendors they would not have evaluated in the search-engine era. Your category is now broader.

Vendors you have never tracked as competitors are showing up in the answers buyers are using to build their shortlists. Conversely, if your name is not surfacing in those answers, you are losing deals to vendors you may not even be aware of yet.

The vendors that are starting to shape this layer share a few characteristics. They publish authoritative, ungated content on category-defining topics — the kind of analysis an AI is most likely to cite when answering a category question. They invest in third-party legitimacy: named analyst reports, credible profiles on major review sites, presence in the independent publications that form the corpus these models draw from.

They structure their digital presence — schema markup, well-organized resource libraries, citable data — to be readable by the systems that increasingly mediate buyer attention. And they have started running their own brand-monitoring queries against the major LLMs, treating those answers as a measurable surface their team is responsible for shaping.

The vendors that are losing this layer are the ones still treating AI visibility as a future problem. They are running 2024 demand-generation playbooks against 2026 buyer behavior, watching their inbound funnel weaken, and concluding that the market has slowed. The market has not slowed. The discovery layer the market uses has moved.

What happens inside the meeting makes the gap worse

Once you understand that most inbound is confirmation traffic from buyers who already have a preferred choice, the meeting itself takes on a different character. The seller is not opening a conversation. They are being auditioned against a standard the buyer has already set.

And most reps fail that audition in the same way — for reasons that are structural as much as they are behavioral.

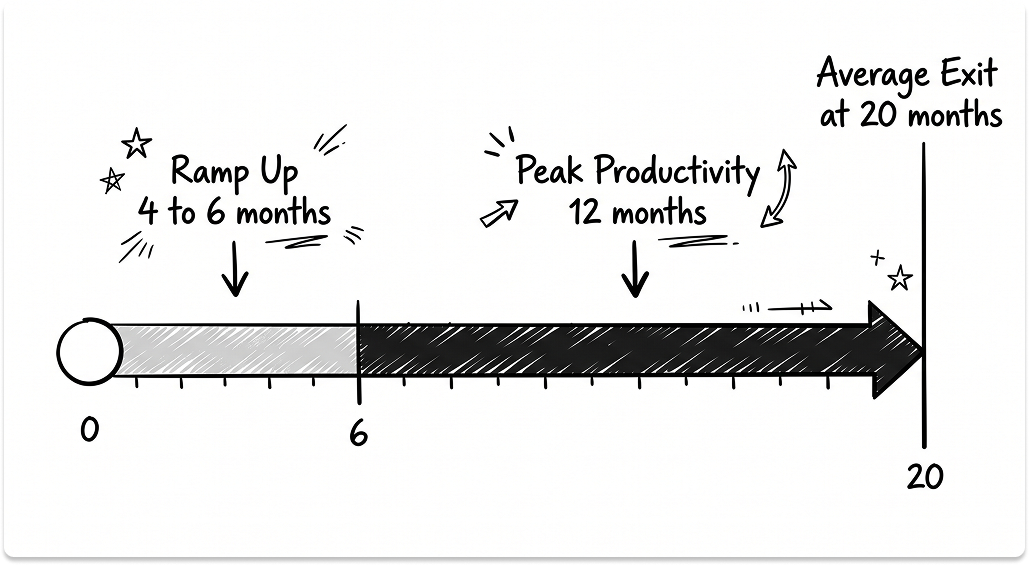

Annual B2B sales team turnover is now 35%, according to Optifai's 2025 benchmark of 939 companies. The average rep tenure has fallen to 18 to 20 months, well below the two-to-three-year window in which sellers historically reach peak performance.

When you subtract the four to six months it takes a rep to reach full productivity, you are left with roughly a twelve-month window in which the average rep is operating at their best. Most sales organizations are not designed for that math. Their hiring strategy, onboarding investment, and retention economics are still built for a world in which reps stayed long enough to fully develop.

What this produces in practice is a sales force that is, on average, less experienced and less consultative than the buyer sitting across from them expects. That buyer has done six weeks of independent research, has already asked AI to compare the top vendors in the category, and arrives expecting a strategic conversation about their specific problem.

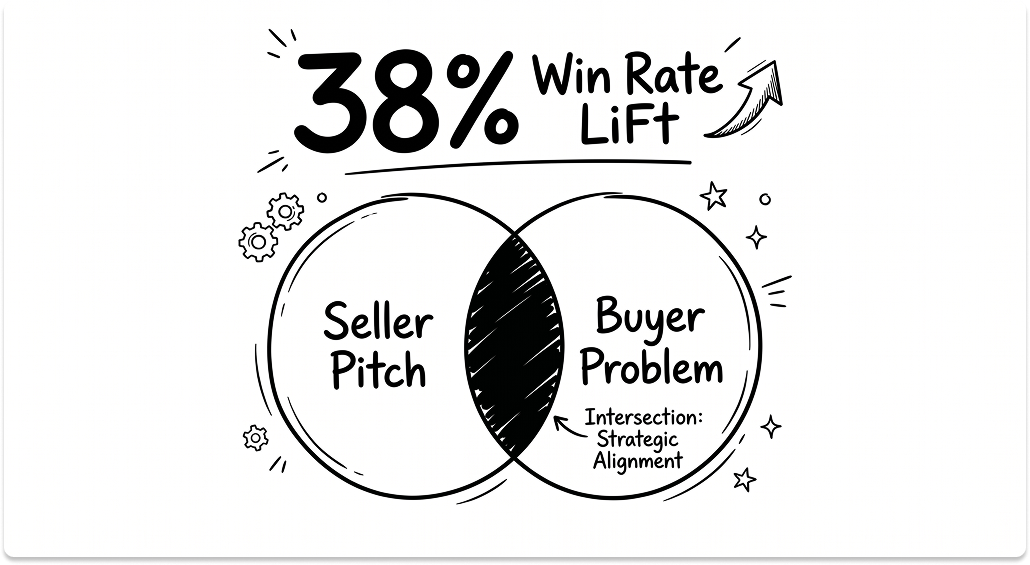

Emblaze's research found a 54.5% misalignment between how sellers and buyers perceive the core problem the buyer is trying to solve. That number is striking on its own. What makes it commercially significant is what happens when the misalignment is corrected: when sellers and buyers do align on problem definition, win rates improve by 38%.

That is the highest-ROI behavioral change I have seen documented anywhere in B2B sales. It does not require new technology. It does not require new headcount. It does not lengthen the sales cycle. It requires asking better questions earlier in the meeting — and actually listening to the answers.

Most sales organizations are not measuring whether this is happening. They measure whether the meeting occurred, whether next steps were set, and whether the rep submitted notes to the CRM. Almost none measure talk ratio, problem articulation quality, or buyer-seller alignment on the core problem. Metrics drive behavior. The metrics have not changed, so the behavior has not changed either.

What this means in practice is that a vendor competing for a backup-position inbound deal — already behind the buyer's preferred choice — is sending in a rep who has a better than even chance of misreading what the buyer actually wants to solve. The 18% to 25% win rate on reactive deals reflects both of these dynamics compounding each other.

How the three failures connect

These are not three separate problems. They are one problem with three visible symptoms, and they reinforce each other in a way that makes each individual fix less effective without the others.

A vendor that is invisible in AI feeds the reactive trap directly. The buyer asks an AI for the top vendors in the category. The vendor's name does not surface. The buyer builds a shortlist without them.

If the vendor receives an inbound request at all, it arrives as a backup inquiry — and the seller is already competing from second or third position. A rep who leads with a feature pitch rather than a discovery question confirms the buyer's pre-existing preference for whoever came first. The deal is gone before anyone has diagnosed why.

The vendors pulling ahead in 2026 have absorbed something the rest of the market has not: in an AI-mediated, buyer-led environment, the funnel that comes to you is not the funnel that is actually closing.

Four operational moves close most of the gap

First, measure pipeline by initiation source, not just by stage. Most B2B revenue dashboards do not separate reactive from proactive opportunities or track win rates against that distinction. Building that separation into your CRM and your reporting is the prerequisite for everything else. The metric that matters is closed-won revenue per qualified meeting, separated by initiation source. Until that number exists, you are managing a funnel you cannot see clearly.

Second, invest in the proactive motion that actually outperforms. This does not mean the traditional cold-outreach model — the fifty-emails-a-day approach that has been losing effectiveness for a decade. It means proactive engagement built on validated signals: real buying intent, real timing, real fit. Matched demand programs, intent-driven outreach, and account-based motions that begin before the buyer has formed their AI shortlist all fall into this category. The goal is to be in the buyer's consideration set early enough to shape it — not to show up in it as an afterthought.

Third, build AI visibility as a measurable marketing function. Run your own category queries against the major LLMs on a regular cadence. Treat those answers as a brand surface your team is accountable for. Invest in the inputs LLMs reward: ungated authoritative content, third-party legitimacy, structured and citable digital presence. The vendors shaping their AI visibility in 2026 will be the vendors the AIs of 2027 quote by default. The vendors ignoring it will continue to wonder why their pipeline keeps thinning while their search rankings hold steady.

Fourth, redesign rep economics for the tenure reality that actually exists. If your reps are leaving in 18 months and reaching full productivity in four to six months, you have roughly a twelve-month productive window per rep. Hiring strategy, onboarding investment, and retention programs need to be designed for that reality, not the reality of five years ago. The 38% win-rate lift from problem alignment is fully coachable. But it requires enough time in the role to develop, and most organizations are cycling through reps faster than that skill ever has a chance to form.

What I want CEOs and CROs to take from this is not that inbound is bad. Inbound is real demand, and it should be converted as efficiently as possible. The problem is treating inbound volume as a proxy for pipeline health, and inbound conversion as a complete revenue strategy.

A growing inbound funnel can mask declining pipeline conversion. If inbound volume is up 30% and inbound win rate is down 30%, you have not gained anything. You have lost ground: 1.3 times the volume at 0.7 times the win rate is 0.91 times the closed deals, about 9% fewer wins, and your team is spending more time on conversations that are statistically unlikely to close. The funnel looks healthy on the dashboard. The revenue math underneath it does not.
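The arithmetic behind the 30%-up, 30%-down scenario can be sketched in a few lines. The function names are hypothetical, and the model is a deliberate simplification: it assumes closed deals equal volume multiplied by win rate and that deal size stays constant.

```python
def relative_wins(volume_change, win_rate_change):
    """Closed-won deals after both changes, relative to a baseline of 1.0."""
    return (1 + volume_change) * (1 + win_rate_change)

def break_even_win_rate_change(volume_change):
    """Win-rate change that exactly cancels a given volume change."""
    return 1 / (1 + volume_change) - 1

ratio = relative_wins(0.30, -0.30)
break_even = break_even_win_rate_change(0.30)

print(f"Closed deals vs. baseline: {ratio:.2f}x")
print(f"Win-rate change that cancels a 30% volume gain: {break_even:.1%}")
```

Under these assumptions the scenario lands at roughly 0.91 of baseline wins, and a win-rate decline of about 23% is already enough to erase a 30% volume gain entirely.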

Pipeline is what you go out and build. Inbound is what comes to you. In 2026, those are not the same funnel. And the companies that still treat them as the same will keep wondering why their best-looking pipeline is also their worst-converting one.

If they came to you easy, you probably came to them second.

FAQ

Isn't inbound a signal of strong brand awareness? Why would we deprioritize it?

Inbound is real demand and it should be converted as efficiently as possible. The problem is not inbound itself — it is treating inbound volume as a proxy for pipeline health. A growing inbound funnel with a declining win rate means you are adding cost and activity for the same or worse output. The goal is to build both motions: convert inbound well, and build proactive pipeline that reaches buyers before their shortlist is set.

How do we find out what an AI says about us in our category?

Run your own queries. Open ChatGPT, Claude, and Perplexity and ask the questions a buyer in your category would ask: "What are the best vendors for [your category]?" or "Compare [your category] solutions for [your buyer's use case]." Record what comes back, note which vendors are named and in what context, and repeat the exercise monthly. Treat those answers as a brand surface your team is accountable for, the same way you would treat an SEO rank report.
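One lightweight way to turn those recorded answers into a trackable number is to count how often each vendor is named. The sketch below assumes you have pasted the AI answers into a list of strings by hand. The vendor names and answer text are placeholders, and nothing here calls any AI provider's API.

```python
import re
from collections import Counter

def count_vendor_mentions(answers, vendors):
    """Count how many recorded answers mention each vendor at least once."""
    counts = Counter({vendor: 0 for vendor in vendors})
    for answer in answers:
        for vendor in vendors:
            # Whole-word, case-insensitive match on the vendor name.
            if re.search(rf"\b{re.escape(vendor)}\b", answer, re.IGNORECASE):
                counts[vendor] += 1
    return counts

# Placeholder answers, as if copied from this month's category queries.
recorded_answers = [
    "Top options include Acme, Globex, and Initech.",
    "For mid-market teams, Globex and Acme are commonly cited.",
    "Globex is often recommended for this use case.",
]
tracked_vendors = ["Acme", "Globex", "Initech", "YourCo"]

mentions = count_vendor_mentions(recorded_answers, tracked_vendors)
for vendor, n in mentions.most_common():
    print(f"{vendor}: named in {n} of {len(recorded_answers)} answers")
```

Keeping one such tally per month gives a simple trend line. A count says nothing about the context in which a vendor is named, so the qualitative notes still matter.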

What does proactive pipeline actually look like in 2026? Isn't cold outreach dead?

Volume-based cold outreach — the fifty-emails-a-day model — has been losing effectiveness for years and does not produce the 33–41% win rates proactive deals achieve. What does work is outreach built on validated buying signals: a budget cycle opening, a contract renewal approaching, a hiring pattern that signals an infrastructure investment is imminent. When you reach a buyer at that moment with a specific, relevant message, you are not interrupting them. You are arriving when they need you, before the AI shortlist has formed and before a competitor has become their preferred choice.

We already track pipeline by source in our CRM. Isn't that the same thing?

Source and initiation type are different fields. Most CRMs record whether a deal came from paid, organic, referral, or outbound — but they do not separate who initiated the first contact, or track win rates against that distinction specifically. The metric that matters is closed-won revenue per qualified meeting, separated by whether the buyer or the seller made the first move. If your CRM cannot produce that report today, you do not yet have visibility into your real pipeline efficiency.
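As a rough illustration of that report, the sketch below computes closed-won revenue per qualified meeting for each side. It assumes each meeting record carries an initiation field and the revenue eventually closed from it. The `Meeting` type, its field names, and the figures are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Meeting:
    initiated_by: str          # "buyer" or "seller" (hypothetical field)
    closed_won_revenue: float  # 0.0 if the deal was lost or is still open

def revenue_per_meeting(meetings):
    """Return {initiator: closed-won revenue per qualified meeting}."""
    totals = {}
    for m in meetings:
        count, revenue = totals.get(m.initiated_by, (0, 0.0))
        totals[m.initiated_by] = (count + 1, revenue + m.closed_won_revenue)
    return {k: revenue / count for k, (count, revenue) in totals.items()}

# Invented sample: four buyer-initiated meetings, two seller-initiated.
meetings = [
    Meeting("buyer", 40_000.0), Meeting("buyer", 0.0),
    Meeting("buyer", 0.0), Meeting("buyer", 0.0),
    Meeting("seller", 30_000.0), Meeting("seller", 0.0),
]

report = revenue_per_meeting(meetings)
for initiator, value in report.items():
    print(f"{initiator}-initiated: {value:,.0f} per qualified meeting")
```

Dividing by meetings rather than by deals is the design choice here: it charges each side for the selling time it consumed, not only for the opportunities that were formally opened.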

Our reps are experienced. Why would problem misalignment still be an issue for us?

Emblaze's 54.5% misalignment rate is not a seniority problem — it is a measurement problem. Most sales organizations track meeting volume, next steps, and CRM notes. Almost none track whether the seller and buyer ended the meeting with the same mental model of the core problem being solved. Experience helps, but experience without measurement does not self-correct. The 38% win-rate improvement from problem alignment is coachable at any tenure level. It starts with tracking talk ratio and problem articulation quality in first meetings, not just whether the meeting happened.